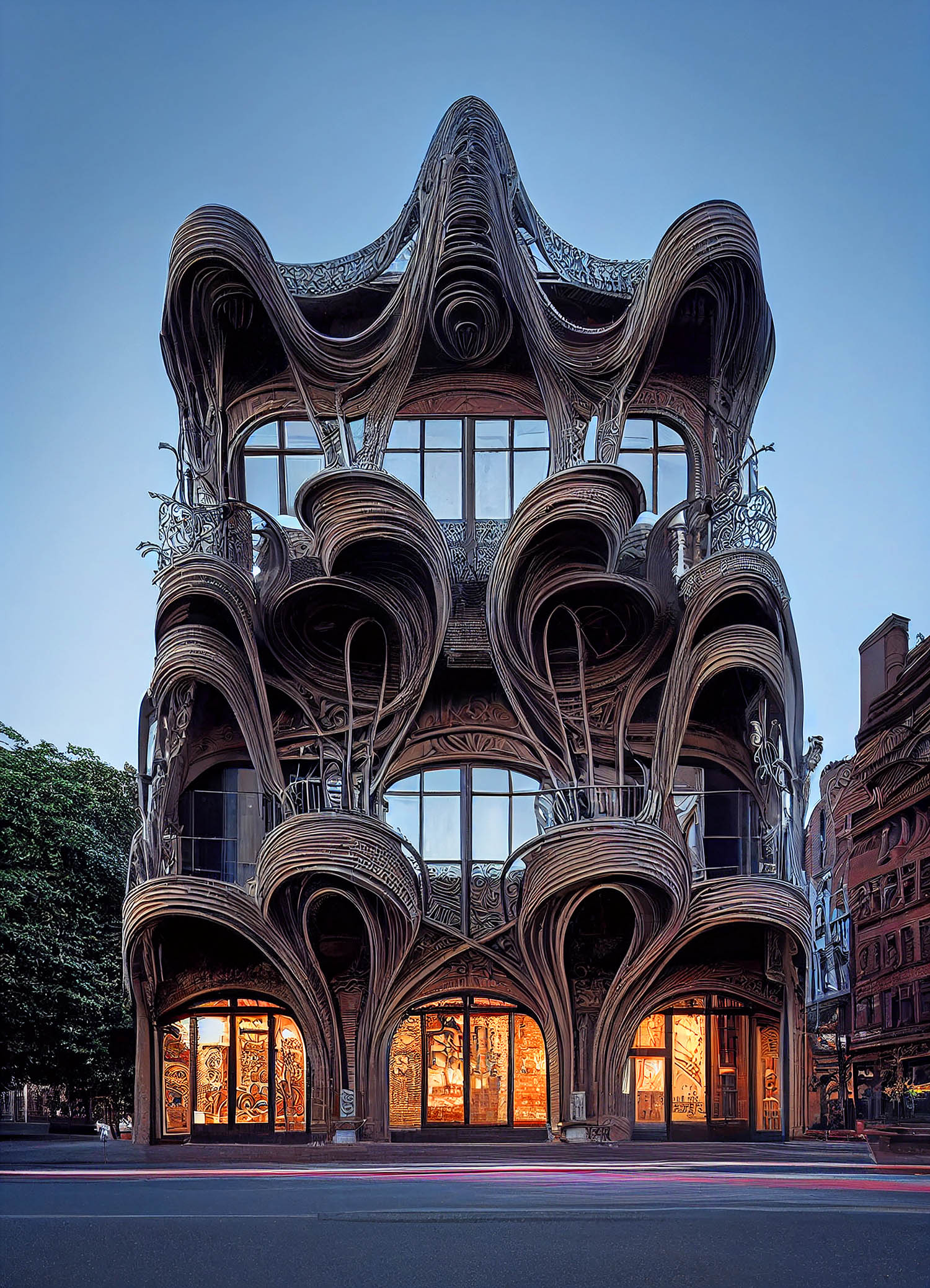

Social media has been alive with an explosion of realistic AI-generated art, created by inputting natural language descriptions into AI tools such as DALL-E, Midjourney and Latent Diffusion. Hassan Ragab has quickly established himself as one of the most prolific and coherent architectural AI concept artists.

Generating AI art is pretty straightforward; it’s based on text descriptions and biases. Want to see what Gaudi would have created if commissioned to design a petrol station? Simply type ‘Gaudi gas station’ into one of the many AI generators and that’s exactly what you will get. We can now get conceptual architecture by merely writing a string of descriptive words. While this might seem like magic, the real challenge is producing iterations of design variations while maintaining a level of consistency, which means refining the word ‘recipe’.

Over the past few months, Hassan Ragab has been posting his Midjourney conceptual architectural work on LinkedIn and is clearly enjoying exploring the nuances of refining the AI output, mixing free-flowing architectural styles with biomimetic materials such as feathers and plant structures.

Ragab has a background in generic design, and experience in everything from furniture design to digital fabrication. He is currently working in construction in downtown Los Angeles, using his computational design skills with Rhino and Grasshopper.

“For me, AI generated art is about pushing your idea, pushing what you want to do and not actually coming up with something that’s entirely out of the world, that looks cool,” he explains. “I’m really interested in designing facades, and having weird, or very interesting shapes interact with it,” he says, adding that he sometimes explores other areas of architectural design, such as interiors.

By creating so many variations on a theme, does Ragab feel he has control of the AI generation process? “I don’t think anyone would be able to fully control the outcome of these generators,” he says. “You have only a certain amount of control. But again, that’s the beauty of using them! You don’t want to use them to create a certain thing, you don’t want them to create something that’s in your head, you want them to push your idea, to have another perspective, another outcome.

“However, the more I work with Midjourney, the more I feel the need for control, because when you’re very ambiguous about what you want, the AI has biases and that’s why many people [are] having similar results. The main reason for that is that they are not being really specific enough.

“The way I use the prompts is really important,” adds Ragab. “Again, it’s about building from the bottom up, using simple prompts. This is a good way to keep the ideas in control. But the main element of control is within the creation process, is to be specific with your definitions. But at some point, I might get this really, really cool output and that will change my direction entirely!”

Ragab explains how he uses branching to refine designs. “Sometimes I go into different branches in parallel and, if I like the output, I’ll tweak my prompts based on the ones I like. I have to improvise all the time on how I generate my prompts, while also trying to stay in control. And no matter how much I feel in control, I always get surprised. But again, that’s actually really what I want!”
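The branching idea can be pictured with a few lines of Python. This is only a minimal sketch under stated assumptions: the `branch` helper, the base prompt and the choice of modifiers are hypothetical, invented here for illustration, and it does not call Midjourney or reproduce Ragab's own prompts. It simply shows how one parent prompt can fan out into parallel variants, with the next round built on whichever variant is kept.

```python
def branch(prompt, modifiers):
    """Return one new prompt per modifier, each extending the parent prompt."""
    return [f"{prompt}, {modifier}" for modifier in modifiers]


# Hypothetical starting point and modifiers, chosen only for illustration.
base = "building facade"
first_round = branch(base, ["feathers", "plant structures"])

# Suppose the second variant is the one we like; refine only that branch.
liked = first_round[1]
second_round = branch(liked, ["close-up", "zoomed out"])

for candidate in second_round:
    print(candidate)
```

In practice the "liked" branch is picked by eye from the generated images, which is where the improvisation Ragab describes comes in.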

From talking with Ragab, it’s clear that a certain mental approach to trial and error helps. To get to the images displayed on these pages, Ragab would typically have gone through 100 image iterations and word definition / bias experiments before being happy with the end result.

With control coming entirely through prompts, it’s also important for the scene to be set. Many users forget that the framing and context of what’s generated are also controllable, as Ragab explains: “There are certain elements that I always define, for example, like the image angle, close-up or zoomed out. If I want to make a realistic photograph, for example, one way is to put your building in context, with streets, people and cars.”
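As a rough illustration of keeping those fixed elements explicit, the Python sketch below assembles a prompt from a subject plus optional style, angle and context terms. Everything in it is an assumption made for the example: the `build_prompt` function, its parameters and the comma-separated layout are hypothetical, not a Midjourney API and not Ragab's actual recipe.

```python
def build_prompt(subject, style=None, angle=None, context=()):
    """Join a subject with optional framing and context terms into one prompt string."""
    parts = [subject]
    if style:
        parts.append(style)
    if angle:
        parts.append(angle)
    parts.extend(context)
    # Comma-separated phrases are an assumed convention, not a tool requirement.
    return ", ".join(parts)


prompt = build_prompt(
    "Gaudi gas station",
    style="realistic photograph",
    angle="zoomed out",
    context=("streets", "people", "cars"),
)
print(prompt)
```

Separating the subject from the framing terms makes it easy to change one element, such as swapping "zoomed out" for "close-up", while everything else stays constant between iterations.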

In our conversations with Ragab, we briefly talked about AI design and a possible future where AI applications actually create 3D geometry, as opposed to images. “I know at some point this technology will drive architecture and it will be fascinating. I think it’s very important for everybody to understand this technology. This will affect architects and designers, at some point, so it’s really time to learn how these generators work, the limitations, the biases,” he explains. “This is a preparation period as AI will have a major impact when there is a merger between AI and 3D model generation. I think things will get very chaotic, very quickly and we need to be prepared for that. So right now, I’m just focusing on how can I control it, how can I understand how to deal with it and what are the limitations.

“AI is not a threat to artists; it’s a threat to the skill of the artist – the skills that are acquired. In my opinion, art is a mix between skill and spirit, and the spirit is more important to the artist. AI kind of gives accessibility to a lot of people who don’t really have those artistic skills. Anyone can now produce that kind of art. That’s the real threat. If you’re a true artist, in my opinion, then you will find a meaningful way to use these AI tools in your own workflows.”

■ Instagram www.instagram.com/hsnrgb

■ Website www.hsnrgb.com

■ LinkedIn www.linkedin.com/in/hsnrgb