Omniverse Cloud services bring design collaboration to teams regardless of hardware

Nvidia is looking to open up its Omniverse collaboration platform to a wider audience with the introduction of Omniverse Cloud. The new service, announced today at Nvidia GTC, allows design teams to collaborate using GPU-accelerated virtual machines without having to invest in IT infrastructure.

Among Omniverse Cloud’s services is Nucleus Cloud, a “one-click-to-collaborate” sharing tool that enables designers to access and edit large 3D scenes from anywhere, without having to transfer massive datasets.

Nucleus Cloud was first shown at CES earlier this year, but the big news for GTC is that support for Omniverse Cloud will now extend to Omniverse Create and Omniverse View.

This means that Omniverse Create, for interactively building 3D worlds in real time, and Omniverse View, an app for non-technical users to view Omniverse scenes, can now have full simulation and rendering capabilities streamed using the Nvidia GeForce NOW platform, powered by Nvidia RTX GPUs in the cloud.

Designers can ‘instantly’ invite other collaborators to join a session by sending a link. And those collaborators don’t have to own Nvidia RTX hardware. They can use any device to access the service – Chromebook, Mac, or tablet.

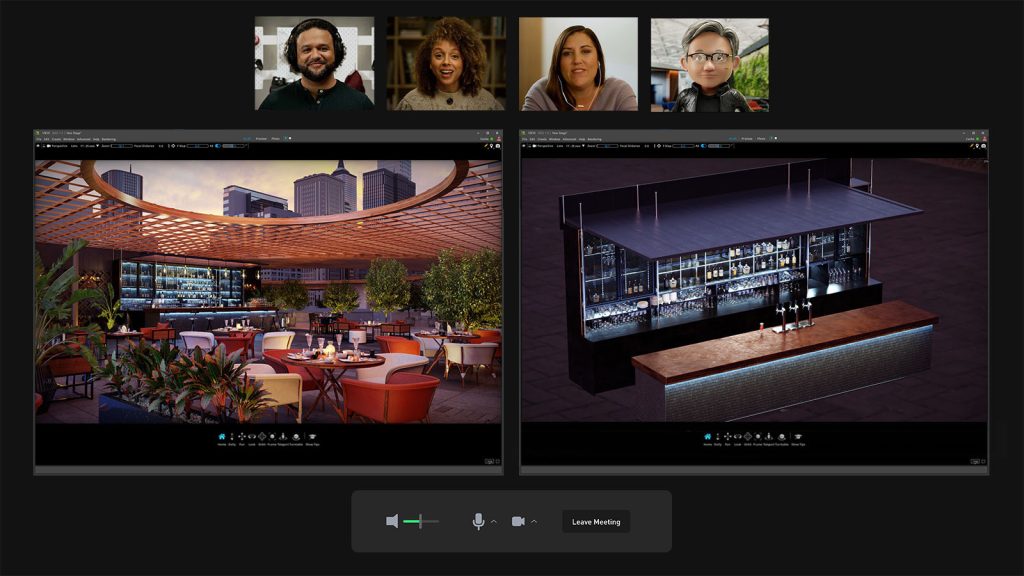

In his keynote address at Nvidia GTC today, Jensen Huang, founder and CEO of Nvidia, showed a demo of the future of design featuring three human designers and one specialist Omniverse Avatar AI designer collaborating virtually in Omniverse Cloud, making design changes to an architectural project.

The team conversed using a standard web conferencing tool, while connected to a scene hosted in Nucleus Cloud. One human designer ran the Omniverse View app on their RTX-powered workstation, while the other two streamed Omniverse View from GeForce NOW to their laptop and tablet.

“At KPF, a global leader in architectural design, we value the ability of our designers to collaborate as seamlessly as possible by making cloud-first technologies available to them when they need it,” said Cobus Bothma, director of applied research at Kohn Pedersen Fox Associates. “Omniverse Cloud fits perfectly into that practice with the promise of excelling our visual and 3D design collaboration abilities by enabling our teams to work in Omniverse from any device, anywhere.”

Omniverse platform enhancements

There have also been several new developments in the Omniverse platform in general, including an expansion of the products that plug into the Omniverse ecosystem. These include a new connector to Maxon Cinema 4D and an enhancement to the workflow with Unreal Engine.

Bentley Systems has also announced the availability of visualisation software LumenRT for Nvidia Omniverse, powered by Bentley iTwin. According to Richard Kerris, VP of Omniverse, this is very different to the many plug-ins available for the platform, as he explained to AEC Magazine. “This is them [Bentley Systems] committing to and using Omniverse as a compute engine underneath the hood of their products. So, what customers will experience is using LumenRT, but the benefits they’ll get are from the technology that Omniverse provides to them.

“This is the first in a number of companies that we’ve been in active discussions with about using the compute engine of Omniverse to power their next generation product,” he added.

Rendering support has also been enhanced in Omniverse, and users can now integrate and toggle between their favourite Hydra delegate-supported renderers and the Omniverse RTX Renderer directly within Omniverse Apps. Betas are available for Chaos V-Ray, Maxon Redshift and OTOY Octane, with Blender Cycles and Autodesk Arnold coming soon.

Omniverse for digital twins

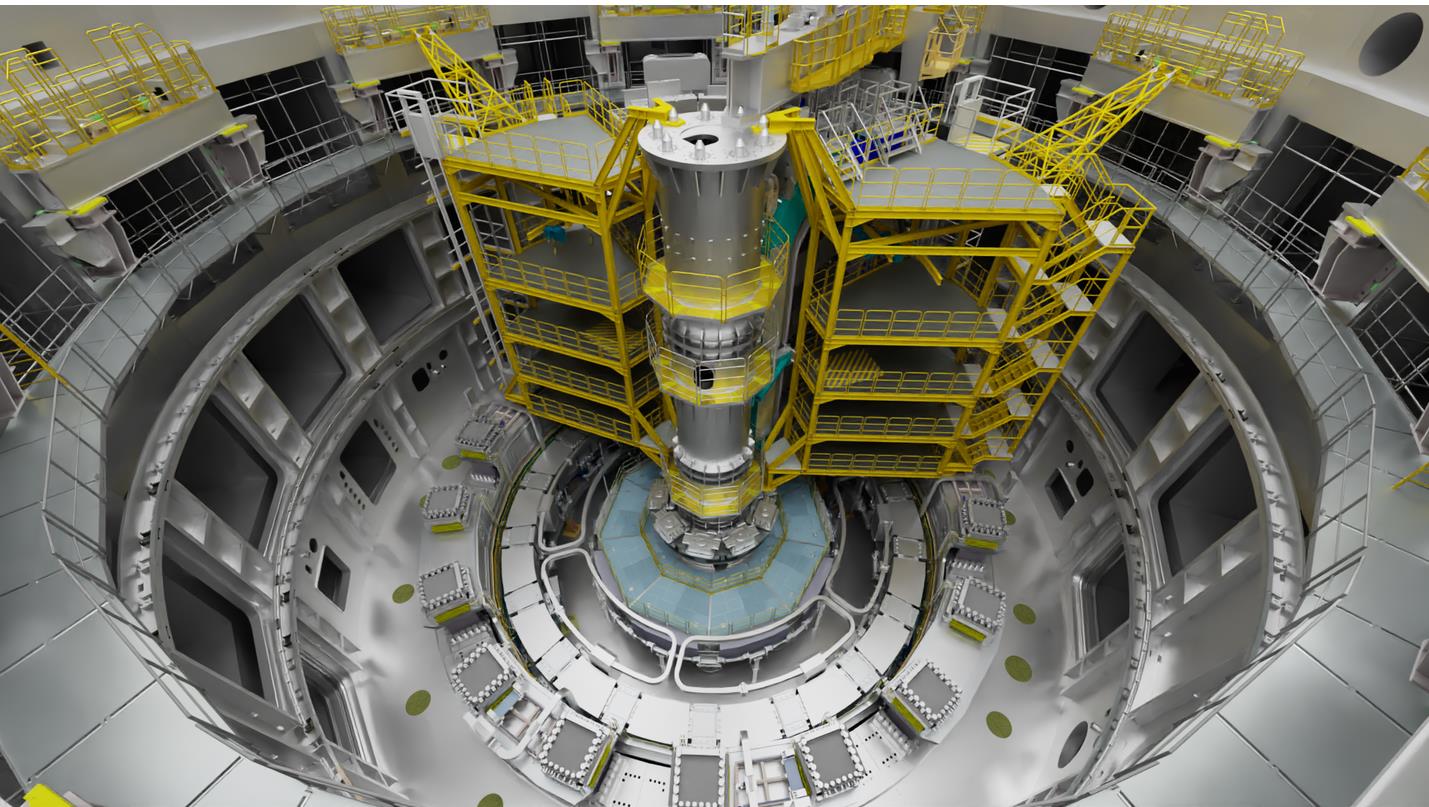

Nvidia sees a significant growth opportunity in using Omniverse for digital twins. In addition to plugging in design data, Kerris explains that sensors for digital twins, such as LiDAR scanners and ultrasonic and image sensors, are now connecting to the platform.

“You can use a mobile device today and go use the LiDAR scanner that’s in the current models of many of them, and capture data that’s USD data and bring it into Omniverse, for example,” he says.

“We’ve started a concentrated effort to talk with a number of the companies that are making these sensors and other companies that do ecosystems out to those sensors as well.

“The idea is anything that can be connected should be able to be connected to Omniverse.”

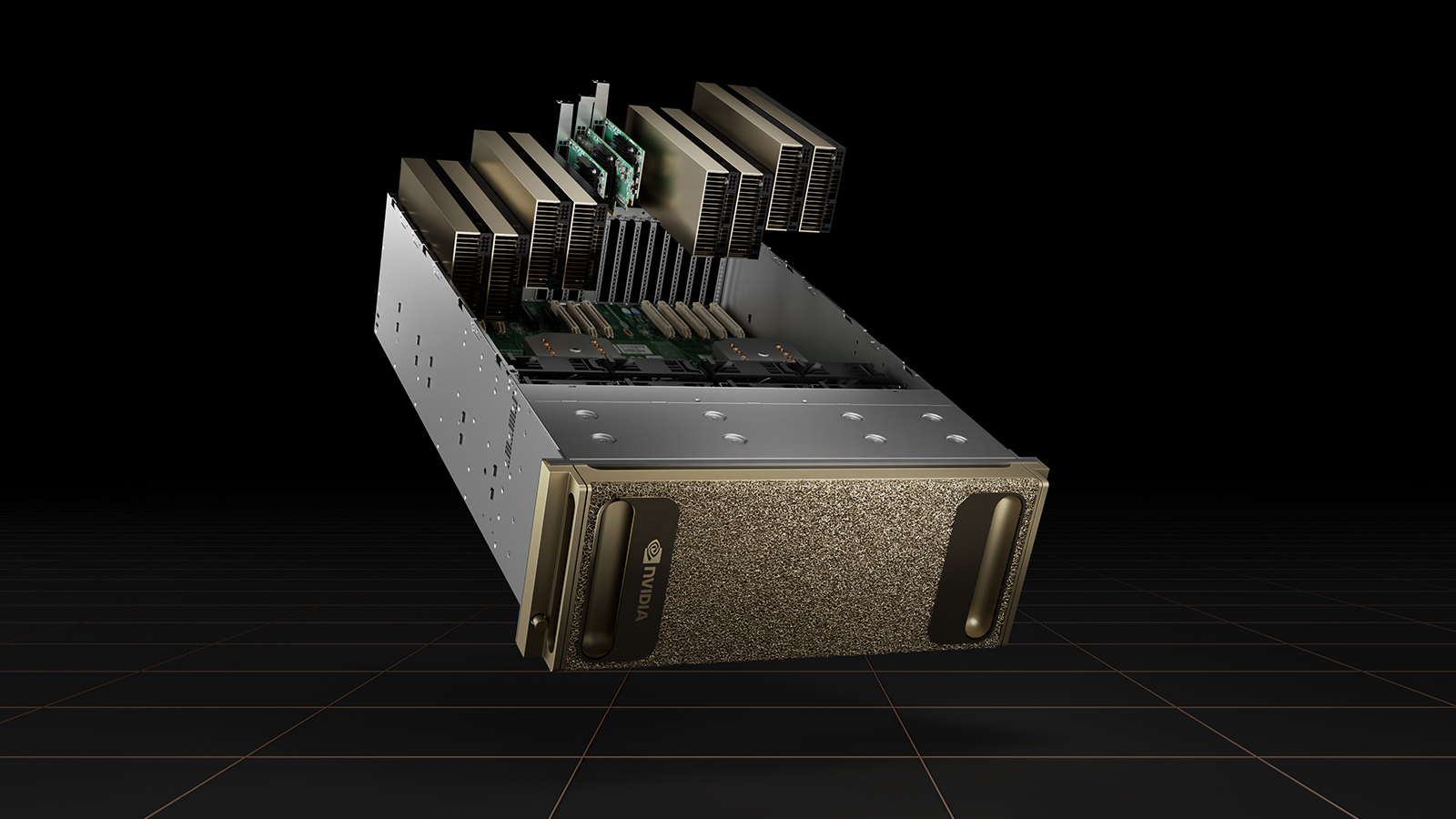

To help process these huge datasets, Nvidia announced Nvidia OVX, a computing system architecture designed specifically to power large-scale digital twins. According to Nvidia, the OVX server, which includes eight Nvidia A40 GPUs, is built to run complex simulations within Omniverse, enabling designers, engineers and planners to create physically accurate digital twins and massive, true-to-reality simulation environments.