Digital Twin is a term we’ve heard many times, in many different contexts, meaning many different things, and there’s a danger of it simply being used to replace the highly overused ‘BIM’. Bentley has a vision that might not clarify its use, but it certainly has a technology backbone to support it. Martyn Day and Greg Corke report.

The term Building Information Modelling (BIM) is at least 20 years old and, as terminology goes, it’s become less of a differentiator. As always happens, fresh workflows and tools come along and have new buzz words and marketing and, in our space, the concept of the Digital Twin has somewhat taken over. While BIM is a process that typically delivers a 3D model of a design, in essence the Digital Twin takes this model and augments it with data for performance simulation or for maintenance over the lifecycle of the building or constructed asset.

In simple terms, this makes perfect sense. However, what was designed is never exactly what gets built and what gets modelled doesn’t necessarily match the sequencing or make-up of the physical construction. A ‘Digital Twin’ cannot be a twin if it doesn’t truly match the as-built asset.

If this level of accuracy is achieved, then how can the Digital Twin be utilised? To be a true Digital Twin, does the physical building or asset need to be connected ‘live’ through Internet of Things (IoT) sensors or would it suffice to have a reality model captured every few months to monitor and assess changing conditions? There are many questions and, unfortunately, we have an industry that is very adept at jumping on jargon bandwagons and obfuscating any original meaning.

Bentley Systems is into Digital Twins in a big way. Much of the messaging at the 2019 Year In Infrastructure (YII) Conference in Singapore was around establishing workflows to create Digital Twins and to leverage those data assets across all sorts of projects, including civils, architecture, plant and transport.

Company CEO, Greg Bentley explained, “Our endeavours till now have made software to produce useful deliverables, but therefore a static and dated purpose. Our objective in Digital Twins is to make the value of those deliverables endure over the longevity of the asset and have evergreen visibility into what has been ‘dark engineering’ data to synchronise its changes over time and to open up this dark data for immersive visualisation.

“But even more importantly, the visibility of analytics, including analytics over time for Digital Twin is that fourth dimension, 4D.”

Bentley went on to say how the combination of CAD, GIS, scan and BIM data to make a Digital Twin can help simulate and explain real world performance, when combined with IoT sensors. For Bentley Systems, Digital Twins are predominantly about providing benefits to the owner / operators.

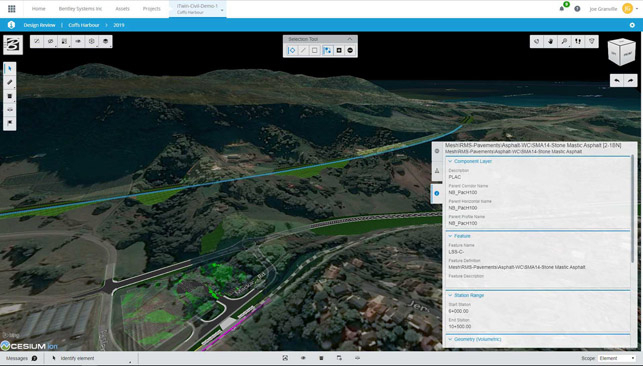

Obviously, this data is only a twin if we continue to synchronise and maintain a digital chronology over a lifecycle. In previous years, we have seen Bentley focus heavily on beefing up its reality capture capabilities (photogrammetry or laser scan to mesh). Now the firm has the technology and service capability to capture infrastructure and built assets highly accurately, at building or city scale, and convert them to ‘Reality Meshes’. Using this technology and Bentley’s iTwin Services, a platform for managing and consuming infrastructure digital twins, it’s possible to keep both the original BIM model and the reality mesh from the actual asset in sync.

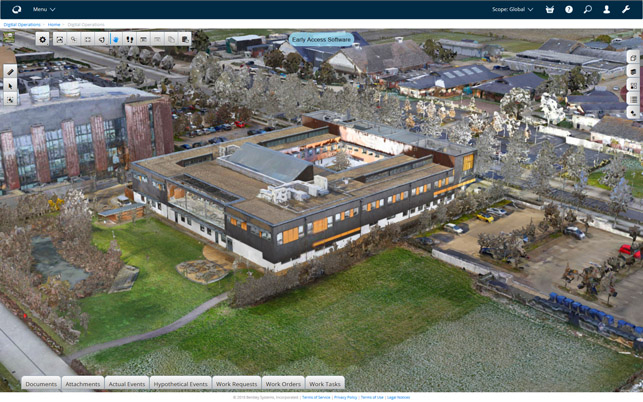

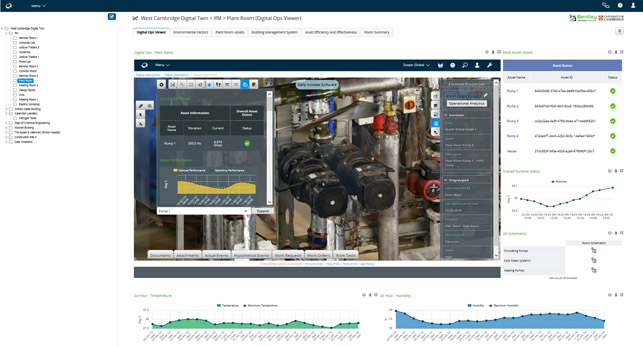

In many ways, the UK, for all the negativity aimed at its construction market’s efficiency, has led the charge in adopting BIM and now Digital Twins. Greg Bentley heaped praise on the efforts of the Centre for Digital Built Britain at the University of Cambridge, which has been helping define Digital Twin workflows. Using its own West Cambridge campus as a guinea pig, researchers have utilised ContextCapture reality modelling to create a 3D model for its Digital Twin. This is then managed by Bentley’s AssetWise, together with AssetWise Operational Analytics, to give insights into the collected IoT data and to help to predict asset failures or operational events.

The West Cambridge project is a pilot for the National Digital Twin, built on the ‘Gemini Principles’. Offering guidance, specifications and standards, the Gemini Principles were defined in 2018 by a task group from the Open Data Institute, co-founded by the inventor of the web Sir Tim Berners-Lee and Artificial Intelligence (AI) expert Sir Nigel Shadbolt. In short, Twins must have a clear purpose, be trustworthy and function effectively.

Considering the scale and diversity of data/systems used throughout the UK, delivering a monolithic twin for the whole of the country would be impractical and undesirable. The plan for the National Digital Twin is to create a secure, federated twin: an ecosystem of digital twins owned by different asset owners and connected by securely shared data. These assets/systems would be represented at different levels of granularity. High quality, standardised data and seamless interoperability are of paramount importance (read our National Digital Twin article).
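To make the federated idea more concrete, here is a minimal Python sketch. It is not taken from the National Digital Twin programme or from any Bentley product: the `AssetTwin` and `FederatedTwin` classes, the owners and the figures are all hypothetical. It simply shows separately owned twins answering a shared query while keeping private data to themselves.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AssetTwin:
    """One owner's twin (hypothetical), exposing only what it chooses to share."""
    owner: str
    shared: Dict[str, float] = field(default_factory=dict)   # published to the federation
    private: Dict[str, float] = field(default_factory=dict)  # never leaves the owner

    def query(self, key: str) -> Optional[float]:
        # Federated queries can only see the shared layer.
        return self.shared.get(key)


class FederatedTwin:
    """Hypothetical registry that routes a query to every member twin."""

    def __init__(self) -> None:
        self.members: List[AssetTwin] = []

    def register(self, twin: AssetTwin) -> None:
        self.members.append(twin)

    def query(self, key: str) -> Dict[str, float]:
        # Collect one answer per owner; twins with nothing to share are skipped.
        results: Dict[str, float] = {}
        for twin in self.members:
            value = twin.query(key)
            if value is not None:
                results[twin.owner] = value
        return results


# Illustrative data only.
water = AssetTwin("water utility", shared={"flood_risk": 0.2}, private={"pump_cost": 1.0e6})
rail = AssetTwin("rail operator", shared={"flood_risk": 0.6})
power = AssetTwin("power network", shared={"load_mw": 420.0})

national = FederatedTwin()
for twin in (water, rail, power):
    national.register(twin)

flood_view = national.query("flood_risk")
```

Here `flood_view` holds answers from the water and rail twins only, and a query for `pump_cost` returns nothing because that value sits in a private layer. A real federation would also need the standardised data and security that the Gemini Principles call for, which this sketch ignores.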

As one would expect, Bentley is very focused on interoperability and relies on the open source iModel.js library, which is designed to be easily used and integrated with other systems. At its core, iModel.js is a wrapper for all sorts of data from all sorts of sources. When used in conjunction with iModelHub, design tools (even Autodesk’s Revit) can store all the design changes made to a model through all phases of the design process, giving insight into how it came to be.

iModel.js can also handle incredibly large models and this is important when identifying the key target markets for Bentley’s Digital Twin technology, as Keith Bentley, CTO explains, “In the UK, it’s the transportation industry, trains and road (Highways England), where you would have a really large model. It would be much larger than can be managed on a desktop computer or an application that tries to load it all in memory.

“Civil applications are the second largest Digital Twin use case that we see, such as using drones and AI to identify cracks for predictive maintenance and mapping that back to the Twin. Then there’s commercial buildings and campuses. The city models that we’ve been shown are another good example. But a Digital Twin of a city is a different beast to a twin of a plant.

“City models require context information and you have GIS data, you have building outlines, but it would not be as detailed as for plant. But here, companies involved in the water system would like to be able to have a holistic view of how the city is working and would like to see a connection between these context models, plus their real model of the water system or the subway system. These are the things people are trying to build.”

Creating twins

Much of Bentley’s YII event concerned how firms go about implementing a workflow to build useful Digital Twins. Santanu Das, SVP, design integration business unit showed a plan with five stages of maturity to achieve Digital Twin execution.

It’s possible to start by simply referencing where your asset is located on Earth. Stage two is to aggregate the associated data into a 3D model and host it on the cloud for collaboration. Stage three is to add a 4D timeline. Stage four is all about connecting IoT devices to the real-world asset. The final stage is to use analytics to get visibility of real-time performance, which can be linked back to the Digital Twin to provide automatic reports, warnings or to schedule predictive maintenance.

Das makes the point that you don’t need to get to stage five for it to become a Digital Twin. “It just means that at any particular stage I keep augmenting my particular asset with these stages of sophistication,” he says.
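The five stages can be sketched as a simple maturity check. This is an illustrative Python sketch, not Bentley’s own scheme: the `TwinStage` names and the `maturity` function are hypothetical, and the code assumes the stages are cumulative (each builds on the one before), which is our reading of Das’s description rather than something he stated outright.

```python
from enum import IntEnum
from typing import Set


class TwinStage(IntEnum):
    GEOLOCATED = 1      # asset referenced to its location on Earth
    AGGREGATED_3D = 2   # data aggregated into a cloud-hosted 3D model
    TIMELINE_4D = 3     # 4D timeline added
    IOT_CONNECTED = 4   # IoT devices connected to the real-world asset
    ANALYTICS = 5       # analytics on real-time performance


def maturity(capabilities: Set[TwinStage]) -> int:
    """Return the highest stage reached without skipping an earlier one.

    Assumption: stages are cumulative, so a gap caps the maturity level.
    Returns 0 if even the first stage is missing.
    """
    level = 0
    for stage in TwinStage:  # iterates in definition order, 1 to 5
        if stage not in capabilities:
            break
        level = stage.value
    return level


campus = {TwinStage.GEOLOCATED, TwinStage.AGGREGATED_3D, TwinStage.TIMELINE_4D}
campus_level = maturity(campus)
```

In this example `campus_level` is 3. Under the cumulative assumption, an asset with IoT sensors but no 4D timeline would still sit at stage two, although in line with Das’s point it would count as a Digital Twin at any level above zero.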

In many respects, a lot of the confusion and blurring of meaning within ‘Digital Twin’ comes from the fact that the start point really is the creation of a BIM model. To stand any chance of attaining Digital Twin nirvana, all the data from planning, through design and construction, to operation has to be collected and referenced.

In the real world, the BIM model is mainly used to create drawings for construction, perhaps with a COBie file alongside if you’re lucky, which provides a list of serviceable items in a building. Of course, there might also be a construction BIM model too. A Digital Twin is layers and layers of augmentation on top of the original 3D model and requires a holistic approach to data gathering and storing.

Bentley has a concept of Project Digital Twins and Performance Digital Twins and this is perhaps a more complex definition to get your head around. A Project Digital Twin is the front-end work involved in the design and construction of any asset. This is predominantly but not exclusively what most people call today the BIM process, which creates a multi-layered model with associated drawings and project information. It could also have a 4D timeline to provide an accountable record of who-changed-what-and-when, as well as simulating construction, logistics, and fabrication sequences.

A Performance Digital Twin is for the as-built asset, for simulation and operations over its lifecycle, and is created from this original BIM design data plus, in some cases, a reality model of what actually got built. While there is probably only one Project Digital Twin, there can be multiple Performance Digital Twins created from that data to suit multiple lifecycle tasks, whether that be monitoring energy usage, pedestrian flow, space utilisation, traffic flow, facilities management etc. In a Performance Digital Twin, artificial intelligence (AI) or machine learning (ML) can also be used for analytics to gain visibility and insight and to help enhance the effectiveness of the asset.

Obviously, there are a lot of buildings and infrastructure that are already in existence and were probably developed using a traditional 2D drawing process. In this case, the project would be a reality model, created from a laser scan or photogrammetric survey to capture the as-built. If an intelligent model is required, this may mean re-modelling from the scan data. Both of these approaches are being explored in the University of Cambridge project.

With so much data being required to be collated together, this is where Bentley iModel.js technology comes in, as it was architected to process data in the cloud into a format which has multi-resolution representations, in much the same way that Google Maps works. If you zoom into a region you get a more detailed view. With iModel.js there can be multiple models with different degrees of tessellation, but all have the underlying knowledge of versions, links and relational data. This has almost infinite scale and could be as large as a country. This can only be done on a server.
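The multi-resolution idea can be sketched in a few lines of Python. This does not use the iModel.js API: the `Representation` class, the level names, the distance thresholds and the triangle counts are all invented for illustration. It only shows the general principle of serving a coarser or finer tessellation depending on how far the viewer has zoomed in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Representation:
    name: str
    max_distance_m: float   # use this representation up to this viewing distance
    triangles: int          # illustrative tessellation budget


# Ordered from finest to coarsest; all values are made up for illustration.
LEVELS = [
    Representation("component", 50.0, 2_000_000),
    Representation("building", 500.0, 200_000),
    Representation("district", 5_000.0, 20_000),
    Representation("country", float("inf"), 2_000),
]


def pick_representation(view_distance_m: float) -> Representation:
    """Return the finest representation whose range covers the viewer."""
    if view_distance_m < 0:
        raise ValueError("view distance must be non-negative")
    for level in LEVELS:
        if view_distance_m <= level.max_distance_m:
            return level
    return LEVELS[-1]  # unreachable with an infinite last threshold, kept as a guard


close_up = pick_representation(10.0)
overview = pick_representation(1_000_000.0)
```

Here `close_up` is the "component" level and `overview` is the "country" level. In a server-side system of the kind described, each level would still carry the same underlying versions, links and relational data; only the geometry sent to the viewer would change.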

The benefits

On the benefits of using a Digital Twin, Bentley brought out some heavy hitters at YII, most notably Shell. Sada Iyer, Shell’s VP projects & engineering explained how the language in the energy market has changed. It used to be all about capital efficiency, but now it’s about lifecycle optimisation.

For Shell, a Digital Twin is the virtual representation of the physical elements and the dynamic behaviour of the asset through its lifecycle. It starts with the 3D model and gets hooked up to the project execution plan through visual tools, enabling digital rehearsals, leading to better construction planning, critical path analysis and then all this data flows over into an as-built Digital Twin.

This is linked to dynamic simulation models and process analysis. If changes need to be made because of a new process, the Digital Twin can be used to see what effect they might have. This gives Shell much better uptime and optimisation of its facilities.

There is also a digital transformation going on at Microsoft around smart buildings. In Singapore, Microsoft occupies six floors of Frasers Tower and the company has wired up the building to a Digital Twin on its Azure cloud to test out twin technology and apply what it learns to its own office layout and operations. The system gives real-time updates every five minutes.

Microsoft has also been eating its own dog food closer to home at its Redmond campus. With a view to driving down costs, it connected all 145 buildings with two million sensors (although it subsequently found that only a quarter of those sensors were relevant). By running the data through thousands of machine learning algorithms, it was able to prioritise what things should be fixed in what order, based on the potential cost impacts on the business. The system cost £2 million a year to run, but it paid for itself in 18 months.
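The prioritisation step can be illustrated with a deliberately simple Python sketch. It is not Microsoft’s system and uses no machine learning: it swaps in a plain expected-cost ranking (failure probability multiplied by cost of failure). The `Fault` class, the asset names and all the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Fault:
    asset: str
    failure_probability: float   # 0..1, assumed to come from some upstream model
    cost_if_failed: float        # estimated business cost, arbitrary currency units

    @property
    def expected_cost(self) -> float:
        # Simple expected-value estimate of the cost impact.
        return self.failure_probability * self.cost_if_failed


def prioritise(faults: List[Fault]) -> List[Fault]:
    """Order faults so the largest expected cost impact is fixed first."""
    return sorted(faults, key=lambda f: f.expected_cost, reverse=True)


# Illustrative data only.
faults = [
    Fault("chiller B12", 0.10, 50_000.0),     # expected cost about 5,000
    Fault("lift A3", 0.50, 8_000.0),          # expected cost about 4,000
    Fault("air handler C7", 0.80, 20_000.0),  # expected cost about 16,000
]
work_order = [f.asset for f in prioritise(faults)]
```

The resulting `work_order` puts the air handler first, then the chiller, then the lift. The chiller has the most expensive failure of the three, but it is unlikely enough to fail that it ranks second on expected cost.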

Microsoft has also been doing research into how its buildings are being utilised, using IoT sensors to monitor occupancy levels at different times, the energy used in each space and if the building is unnecessarily consuming energy when no-one is in it.

While it may sound a tad Big Brother, Microsoft has analysed data in Office applications to see which teams communicate the most, so they can place them closer together in the building(s) to cut down on travel time, as well as analysing usage of meeting rooms and who uses them the most, or books them but doesn’t turn up. Data like this can help owners see whether a building is being used in the best way. And if it isn’t, change it up to make it more efficient. In some buildings Microsoft discovered it was 40% oversubscribed in terms of the space it actually needed. This will help it plan future office requirements and bring down asset costs dramatically.

Microsoft is also using pedestrian simulation on its campus to determine things like how long it would take people to exit a building. Predictive modelling could also help explore the impact of moving a subway station one block north, in terms of pedestrian movement, street traffic, parking, or even commerce.

One of the YII award winners, CCC (China Communications Construction) First Highway demonstrated how it modelled a 68km corridor that included 30 to 40 bridges and had a budget of approximately $7 billion. The company started with a Digital Twin of the existing linear asset which was optically captured to generate a reality model of the existing road and terrain. Conceptual design was done in Bentley OpenRoads ConceptStation, and then moved into OpenRoads to complete the design. Post construction, the operator uses the same model to do traffic simulation, in case they need to do widening in the future.

Singapore itself also has a project to make a Digital Twin. The country is spending $73 million to build a twin of the actual city, down to individual road signs. This will be used for designers, planners and policymakers to explore future buildings and infrastructure scenarios, together with other uses such as mapping the mosquito-borne Dengue fever outbreaks over time, looking at sustainability projects and new traffic measures.

Conclusion

Bentley Systems is really betting the farm on selling Digital Twin workflows to owner / operators that want to have digital dashboards and live performance information from their assets. These are plant, road, rail, campus, water, power and major building owners that have pre-built and future assets. Bentley has had to tackle both problems, developing technology for laser scanning and photogrammetry as well as supporting rich and complex data structures.

There are still some questions to be answered, such as does a Digital Twin need a detailed 3D model? In reality, it probably only needs to have the information that is relevant to what you are trying to achieve with your twin.

Also, have you really got a Digital Twin if you don’t deploy ‘live-link’ IoT devices and if not using IoT, how regularly should your asset be surveyed or inspected, and the data refreshed? In the future this will likely be automated, but until this happens the danger will be in not continually investing in the upkeep of the digital asset. There is a lot still to define, such as best practice and it will be different horses for different courses.

As is the norm with Bentley Systems, looking back over five years (or more) of development work, you can see how the company has built up and fleshed out the backbone of this ‘iTwins’ technology, starting with jumping with both feet into reality capture, then all its asset management tools, the launch of an open source iModel variant (iModel.js) last year, the Azure server-level infrastructure and the placement of its analysis tools in the cloud.

It might not have seemed all that clear at the time but at this year’s YII, it slapped you right in the face. This has been a very long-term vision and is a very smart business angle to run. BIM struggles to get owners excited. However, major infrastructure owners obviously see the value in the longevity and maintenance of their assets and can save money by managing them efficiently through the lifecycle. Digital Twins in this context make a lot of sense. Now we just need to stop marketing people using the term interchangeably with BIM.

What is a Digital Twin?

According to Bentley Systems, a Digital Twin is a digital representation of a physical asset, process or system, which allows us to understand and model its performance.

Digital Twins are continuously updated with data from multiple sources — which is what makes them different from static, 3D BIM models.

Digital Twins of new assets are sourced from the design BIM data and can then be augmented with a scan of the as-built reality. Existing infrastructure and buildings can be modelled through photogrammetry or laser scans to deliver a reality mesh. Alternatively, an as-built BIM model can be created.

In the UK, the Centre for Digital Built Britain (cdbb.cam.ac.uk), a partnership between the Department of Business, Energy & Industrial Strategy and the University of Cambridge, has developed ‘The Gemini Principles’, a set of proposed guides for the development of the National Digital Twin and an Information Management Framework that will enable it.

The CDBB has identified some of the key benefits of Digital Twins as the following:

• Integrating city-scale data to optimise power, waste and transport.

• Better asset maintenance using predictive data analytics.

• Improving organisational productivity.

• Better asset tracking.

• More efficient use and management of equipment.

• Finding ways to reduce energy consumption.

• Using augmented reality to help with maintenance and inspection.
