In AEC the pace of change is accelerating. It took millennia to move from physical to digital drawings, then two decades to fully embrace BIM. Now, with AI, solver-driven design tools could radically reshape our industry in as little as five years, writes Martyn Day

As long-suffering readers will know, I spend a lot of time thinking about how BIM will change through the application of new technology and new workflows. It is the reason AEC Magazine’s NXT BLD conference exists: to explore how we can advance workflows and drive the creation of new technology from developers. That said, most industry change feels gradual, sometimes too slow. Occasionally, though, something feels discontinuous.

In the world of BIM, there’s a technology race underway, with six or more startups working to develop the next generation of tools, aiming to challenge dominant desktop, file-based applications such as Revit and Archicad. Considering the years of knowledge embedded in these platforms, this is a formidable task.

Yet this challenge is balanced by the limitations of existing tools: constraints in collaboration, performance problems with large models, and frustration among mature customers seeking meaningful productivity gains rather than slow, incremental improvements.

The new wave of startups isn’t just rebuilding Revit with better collaboration. As the industry looks to adopt modern BIM applications, it has also begun to ask a more fundamental question: what should a next-generation BIM tool look like in an AI-enabled world?

One emerging answer is a vision of BIM as something resembling an operating system for the built environment. This isn’t a marketing-friendly vision of a unified platform, but the technical reality of a structured data layer sitting between human intent and machine execution. To my mind, the critical question is whether this represents genuine progress or simply the digitisation of institutional constraints.

The myth of neutrality

The “operating system” metaphor would suggest a level of neutrality that BIM has never possessed. In computing, an OS provides a standard abstraction layer for developers. BIM, by contrast, is shaped by the commercial moats of a few dominant vendors. These proprietary schemas are not just technical architectures; they are financial and strategic assets. While Industry Foundation Classes (IFC) is often touted as a universal solvent, the reality is that round tripping still results in significant fidelity loss. Without a genuinely open, vendor-agnostic data layer, “BIM-as-OS” is merely “BIM-as-walled-garden.” The commercial incentives of major vendors remain fundamentally unaligned with the industry’s desperate need for seamless data liquidity.

As venture capital pours into the AEC space to drive these developments, another technology, AI, and specifically AI agents, has become all the rage. We are only just starting to understand its potential to fundamentally upset the very process of design. Only two years ago such levels of automation and the application of deep industry knowledge would have sounded like science fiction. The expected rise of agentic systems highlights the fragility of these walled gardens even more acutely.

AI agents

Most people’s experience of AI today is conversational. You ask a question, you receive an answer. ChatGPT and similar systems are reactive. They respond to prompts. They generate text, images or code, but they do not act unless instructed. They have no persistence, little memory of a live project beyond the current session, and no mandate to pursue outcomes independently.

Agentic AI systems are different. In AEC, there will be live AI tools that can read, interpret, and modify building data, in all its formats and silos. If BIM is to be the runtime where agents create geometry, test compliance, generate the MEP and structural elements, the substrate must be stable and accessible. Humans define the parameters (mass, design, regulations etc.) but machines perform the heavy lifting of solving the building’s systems, with multiple specialist AI agents working together to reach a solution. This assumes a level of cross-platform reliability that today’s fragmented ecosystem cannot easily deliver.

An agentic BIM solution is not about replacing designers. It is about replacing the decaying computer science of the 90s with truly computable substrates

Incumbent vendors face a structural bind. They will need to reach an agentic future, but they cannot get there without carrying their existing users with them. That forces the creation of transitional platforms designed to lift legacy data into cloud environments capable of supporting machine execution. Autodesk’s investment in Forge (now APS – Autodesk Platform Services) and Forma, for example, can be seen as an attempt to re-home Revit’s data gravity in anticipation of that shift.

The risk is that this slow migration collides with a faster external threat. Startups, unburdened by legacy codebases and empowered by increasingly capable foundation models, can emerge almost overnight as credible alternatives. These will not be complete authoring tools but will start encoding industry specialisms.

Engineering automation

The evidence that this shift is already underway isn’t theoretical. It’s visible in specific engineering disciplines that have quietly crossed a threshold. Over the past year I have seen three very ordinary engineering problems solved in ways that feel quietly destabilising. There are no cinematic dashboards, no breathless generative imagery, no marketing theatre – just systems that ingest constraints and return coordinated, buildable outcomes faster than any human team reasonably could.

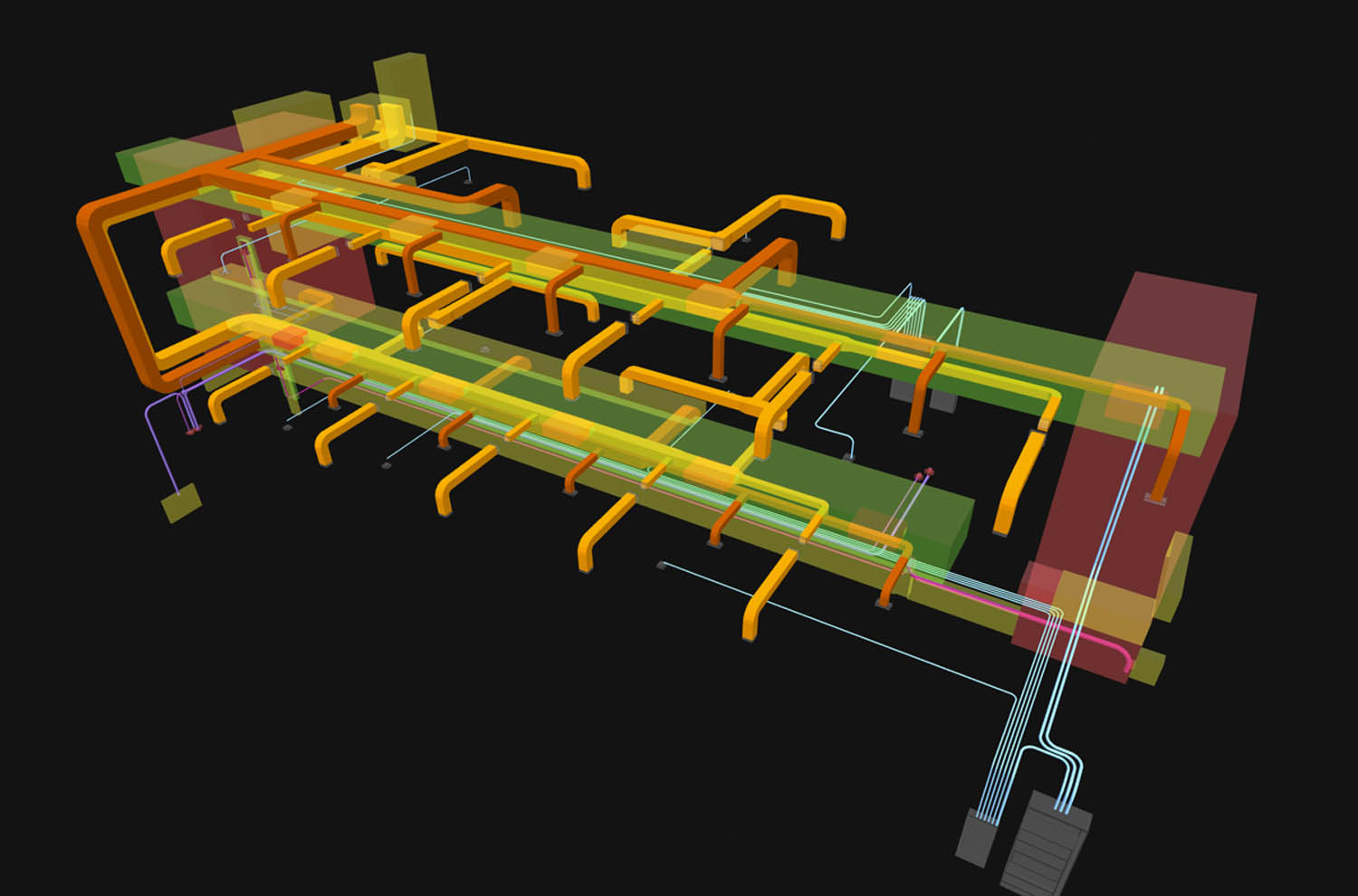

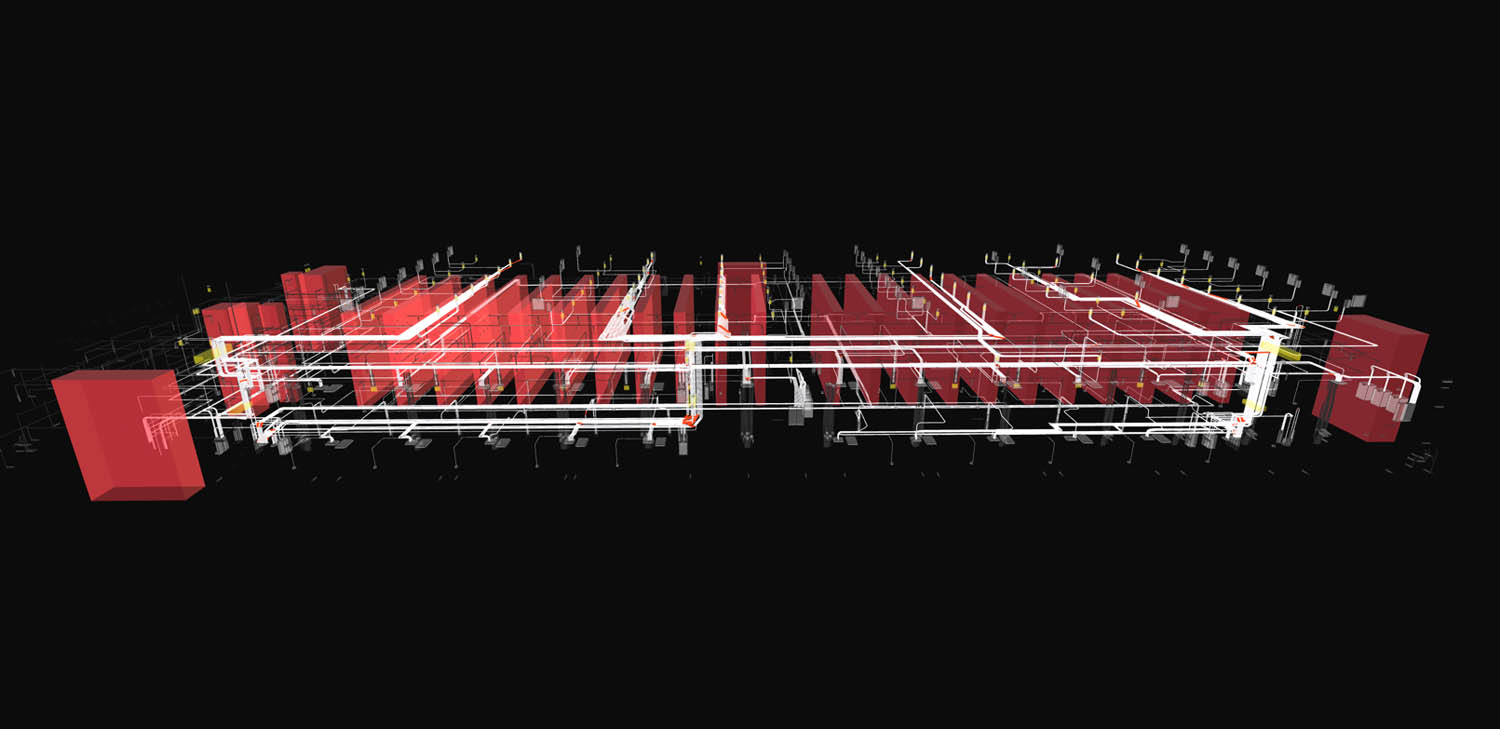

Augmenta showed how its software can automatically wire 25 miles of electrical containments across an entire data centre design, overnight. The Toronto-based startup also gave a sneak peek of its MEP solution, currently in alpha, which can generate a 3D MEP response to a building design, placing all the piping and fixtures. Shifting disciplines, Branch3D showed a structural scheme which continuously recalculates as materials change and geometry shifts position – all in real time.

In all three cases, these were not visualisations. They were discipline-complete solves. The uncomfortable realisation was not that automation is improving; it was that some disciplines have already crossed from assistance into resolution.

Of all the generative and AI-based tools that have come out in the last two years, I would say that those for engineering, particularly electrical and structural, are at the point of tipping into one-pass solvability for a range of standard construction methods. Architecture has not – at least not yet. And that asymmetry matters.

Architecture asymmetry

There is a quieter implication here that most people are not yet comfortable talking about. Architecture will not tip in the same way, at least not at the same speed. Aesthetics, spatial judgement, and experiential quality do not reduce neatly to constraint satisfaction, and while plausible massing and layouts can be generated, taste is not a solvable optimisation problem, except in highly templated building types such as mass housing or standardised commercial developments.

However sophisticated generative tools become, architectural design still contains an irreducible element of human authorship, cultural context, and subjective judgement that resists formalisation in the way that load paths, cable routes, or pressure drops do.

That creates a growing asymmetry. Engineering disciplines are moving toward one-pass computational solves, while architecture remains anchored in slower, more artisanal production cycles. It becomes increasingly strange to imagine a future in which an architectural firm spends twelve to eighteen months developing a building, only for the complete MEP and structural systems to be generated overnight from that scheme. The imbalance is not just temporal. It is organisational and economic. One side of the multidisciplinary team is moving into a world of near-instant viability feedback and solver-driven iteration, while the other remains bound to long design cycles, staged approvals, and human-centred exploration.

However, the assumption that architectural ideation is entirely insulated from this automation is beginning to show cracks. While the subjective core of design remains stubbornly human, the manual scaffolding around that core is being quietly eroded. At the front end of architectural ideation itself, something slightly uncomfortable is beginning to happen. AI rendering tools, which many architects have already embraced as a way of accelerating visualisation and client communication, are starting to drift beyond the production of two-dimensional images and into the territory of spatial inference and geometry generation.

Products such as Google’s Gemini image models, often referred to by the community under the codename “Nano Banana”, can now take rough sketches, photographs, or textual prompts and generate coherent visual outputs that resemble plans, sections, elevations, and volumetric studies. These systems are not producing rule-compliant BIM models or construction documentation, and they still get basic things wrong, but they are beginning to behave as if they understand architectural drawings and spatial conventions rather than merely rendering pretty pictures.

What makes that development interesting is not its current quality, which remains uneven, but its direction of travel. If an AI model can take a street-view image, a hand sketch, or a conceptual prompt and generate a plausible plan-like or section-like representation, then the distance between concept and structured output starts to shrink. What began as AI-assisted rendering creeps into the territory traditionally occupied by early modelling. Even if these tools never become reliable enough to generate production geometry directly, they already compress the conceptual phase of design by allowing architects to iterate spatial ideas far faster than manual sketching or early-stage BIM ever allowed.

In that sense, architecture is not as insulated from automation as it first might appear. The subjective part of design is still there, but the tempo at which ideas can be externalised, visualised, and mutated is accelerating. That does not collapse architectural authorship in the way solver-driven engineering collapses technical production, but it does erode one of the asymmetry’s anchors, namely the assumption that architectural ideation must remain slow, manual, and human-bounded. The front end of design is becoming more machine-mediated even if the judgement layer retains a distinctly human touch.

In a solver-driven environment, engineering is not a downstream check. It sits inside the act of designing itself. When a wall shifts, the structural logic shifts with it. When spans extend, loads recalculate. The response is not issued days later in a coordination meeting. It happens as the model evolves

That asymmetry quietly attacks one of the deepest pieces of institutional infrastructure in the profession: stage-based design. RIBA stages, and their equivalents in other countries, assume that design progresses in discrete, sequential blocks.

Concept design finishes, developed design begins. Structural data is fed back into the design at defined points. Design freezes occur. Packages are handed off. Those assumptions only make sense in a world where feedback is slow and coordination is fundamentally manual. Once structural and MEP viability can be recomputed in minutes or hours, and once architectural concepts themselves can be externalised and iterated at machine speed, the idea that a project meaningfully transitions from one stage to another starts to break down.

Intent versus viability

The traditional BIM workflow separates authorship from analysis. Designers create geometry, engineers rationalise it, and coordination attempts to reconcile both. Much of this process is iterative and human driven. Intent is expressed visually and spatially. Viability is checked after the fact.

However, these new solver-driven systems could invert that sequence. Instead of modelling first and checking later, they encode constraints upfront and generate only viable outcomes. Codes, spatial logic, performance thresholds, cost parameters, and system relationships become the inputs. Geometry becomes the output.

This does not eliminate design, but it shifts the focus of design effort from drawing to declaring intent. Humans define objectives, trade-offs, aesthetics, and qualitative judgement, while the machines explore permutations and enforce constraint satisfaction. The workflow loop changes from model, analyse, revise to specify intent, generate, evaluate, converge. This shift may seem subtle in description, but it has radical implications for current workflows and design timelines.
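To make the intent-first loop concrete, here is a deliberately tiny Python sketch. It is an illustration under stated assumptions, not a description of how any product mentioned in this article works: the `Intent` fields, the 1.5 m planning module, and the rectangular footprint are all hypothetical simplifications. Constraints go in, only viable options survive, and the geometry (here just a width and a depth) comes out.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Intent:
    """Declared objectives and limits (all field names are hypothetical)."""
    target_area_m2: float   # floor area the designer is aiming for
    max_width_m: float      # e.g. limited by the site frontage
    max_depth_m: float      # e.g. limited by a daylight-depth rule


def generate(module_m: float = 1.5, max_dim_m: float = 60.0):
    """Enumerate candidate rectangular footprints on a planning module."""
    count = int(max_dim_m / module_m)
    dims = [module_m * i for i in range(1, count + 1)]
    return product(dims, dims)  # every (width, depth) pair


def is_viable(width: float, depth: float, intent: Intent) -> bool:
    """Evaluate: reject any candidate that breaks a declared limit."""
    return width <= intent.max_width_m and depth <= intent.max_depth_m


def solve(intent: Intent):
    """Generate, keep only viable options, converge on the closest area match."""
    viable = [(w, d) for w, d in generate() if is_viable(w, d, intent)]
    if not viable:
        return None  # the declared intent cannot be satisfied
    return min(viable, key=lambda wd: abs(wd[0] * wd[1] - intent.target_area_m2))


print(solve(Intent(target_area_m2=540, max_width_m=30, max_depth_m=18)))
```

A real solver would search far richer design spaces with far smarter methods than brute-force enumeration, but the shape of the loop is the same: nobody draws the rectangle, they declare what it must satisfy.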

Production time

What happens when electrical containment can be generated in hours rather than weeks? What happens when structural framing can re-solve continuously as geometry changes? What happens when drawings are extracted automatically from coordinated models that were never manually drafted in the first place?

The historic core of BIM work, the artisanal act of constructing project geometry object by object, begins to be hollowed out. In many ways, we are on the cusp of exiting the LEGO phase of BIM.

Zooming out, advances in scan-to-BIM remove manual capture. AI-assisted 2D-to-3D translation removes redrawing. Simulation engines automate performance testing. Clash detection and QA become live services running on the project 24/7. Automated drawing services produce your drawing sets. Discipline generators collapse coordination loops. Digital twins and intelligent point cloud vision systems automate asset reconciliation. Over time, piece by piece, the manual layers of BIM production erode. What remains is orchestration.

Engineers increasingly supervise solvers rather than construct geometry. They test scenarios, adjust constraints, and interrogate outputs. The real human value-add shifts from producing the model to defining the conditions under which the model should exist. If this continues, billing structures based on hours of production become defunct. When an overnight solve replaces weeks of coordinated labour, fee logic changes whether firms are ready or not – something we discuss in more detail later on.

Geometry and agents

For most of its history, BIM has been treated as a modelling problem whose purpose is to produce drawings. The industry concentrated on making geometry easier to author, edit, and document, while intelligence, when it appeared, was layered on top through metadata, parameters, and scripting. These improvements increased productivity, but they did not alter the foundational computer science assumption that the geometry of a design is authored first and reasoned about later.

As software moves towards agentic systems capable of operating autonomously, the limits of that assumption become clear. BIM’s representation of geometry and intent was never designed to be reasoned over by machines. All today’s BIM systems are there to create drawings.

Revit resolves geometry directly as boundary artefacts. Once created, elements exist as objects with parameters acting upon them in place. There is no reconstructable construction history, and no first principles re-solve mechanism. Designers carry intent in their brains, very much external to the software. Today, the model exposes outcome, not the rationale of the design. For humans, this is workable. For today’s AI, it is opaque.

Revit is parametric: change the height of a wall and the wall updates. Adjust a level and hosted elements follow. Parameters drive behaviour and maintain relationships. But those parameters operate locally. A wall object understands its own height and constraints, but that intelligence is embedded inside individual objects and their immediate relationships. The model as a whole is not governed by declared rules, such as building codes.

In a declarative environment, corridors would maintain a minimum width and the structure would satisfy specified load criteria. The designer declares intent, and the system resolves the geometry required to satisfy it.

BIM systems on the market today don’t operate at that level. They update objects when you modify them, preserving relationships the designer has explicitly defined. But they don’t reason globally, in response to high-level goals. This makes them procedural and element-centric, not design intent-driven.
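The difference between an element-centric edit and a declared, model-wide rule can be shown with a toy Python example. Everything in it is hypothetical: the `Corridor` class, the 1.2 m minimum (a placeholder, not taken from any building code), and the naive `resolve` step stand in for what a real declarative system would do with far more sophistication.

```python
from dataclasses import dataclass


@dataclass
class Corridor:
    name: str
    width_m: float


MIN_CORRIDOR_WIDTH_M = 1.2  # placeholder value, not from any real code or standard


def violations(corridors, min_width=MIN_CORRIDOR_WIDTH_M):
    """Global check: names of every corridor that breaks the declared rule."""
    return [c.name for c in corridors if c.width_m < min_width]


def resolve(corridors, min_width=MIN_CORRIDOR_WIDTH_M):
    """Naive 'solver': widen any corridor that falls below the declared minimum."""
    return [Corridor(c.name, max(c.width_m, min_width)) for c in corridors]


plan = [Corridor("C1", 1.5), Corridor("C2", 0.9)]

# Element-centric edit: the object happily accepts a value that breaks the rule,
# because the rule lives in the designer's head rather than in the model.
plan[0].width_m = 1.0

print(violations(plan))           # the rule, once declared, flags both corridors
print(violations(resolve(plan)))  # nothing is flagged after the rule is enforced
```

In the first case the software only knows what each object currently is. In the second it also knows what the design must always be, which is what gives a machine something to reason over.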

As solver-driven workflows and agent-based systems emerge, the difference between editing geometry and computing outcomes becomes fundamental. Today’s BIM platforms are not the right architecture for what’s coming next. But AEC is not the only CAD sector to run out of road in an AI future.

Beyond AEC

Jumping into another sector, mechanical CAD (MCAD) software developers at least attempted to encode constructive logic through feature trees and geometry kernels. As the user models and applies surfaces and features such as chamfers, rounds, and fillets, the computer builds the recipes of the functions that were called to model it. But even there, there are limitations. Blake Courter, long-time geometry and kernel specialist and co-founder of SpaceClaim, argues that these feature trees capture construction sequence rather than actual design intent. Boundary representation kernels themselves are opaque and fragile. Boolean operations fail at edge cases. The geometry engine becomes something you negotiate with rather than reason through.

AEC never confronted this kernel problem, mainly because it never had one! For instance, Revit is a composition system, not a computational geometry engine. It assembles pre-defined objects whose primary purpose is ultimately documentation. Intelligence lives outside the model, in professional judgement and office standards.

That approach made sense when BIM functioned primarily as a structured container for information and drawings. It is far less adequate if BIM is expected to operate as an execution runtime, resolving intent, constraints and performance criteria in real time. We may need to have a technology upgrade that is way deeper than a new BIM 2.0 platform that repeats the same level of abstraction with the same 2D deliverable.

Kernel refresh

Courter proposes an alternative for MCAD, which he describes as geometry as code, replacing stored boundary shape with executable description. In this model, geometry is a function of design intent. Boolean union becomes arithmetic. Intersection becomes a max operation. Offsets are evaluated rather than solved. The representation is implicit and continuous.

Combining shapes is no longer about stitching surfaces together and hoping nothing fails. It is more like combining numbers in a formula. Instead of cutting and merging faces, the system simply recalculates the shape from a set of rules. If two objects overlap, the software resolves the result mathematically rather than trying to rebuild their surfaces with all the complexity and possibility of falling over.

Similarly, creating an offset is not a delicate operation that generates new faces and edges. It just adjusts a value in the underlying description and lets the geometry update automatically. The practical difference is robustness. The model is not constantly repairing boundaries. It is recalculating from intent.
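For readers who want to see what "geometry as code" means in practice, the sketch below uses signed distance functions, one common way of writing implicit geometry. It is an illustration of the general idea, not Courter's implementation, and the helper names (`sphere`, `union`, `intersection`, `offset`, `inside`) are chosen here for clarity. Each shape is a function that returns a negative number inside the solid and a positive number outside.

```python
import math


def sphere(cx, cy, cz, r):
    """Signed distance to a sphere: negative inside, zero on the surface."""
    return lambda x, y, z: math.sqrt(
        (x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2
    ) - r


def union(a, b):
    """A point is in the union if it is inside either shape: take the minimum."""
    return lambda x, y, z: min(a(x, y, z), b(x, y, z))


def intersection(a, b):
    """A point is in the intersection only if inside both: take the maximum."""
    return lambda x, y, z: max(a(x, y, z), b(x, y, z))


def offset(a, distance):
    """Grow (positive) or shrink (negative) a shape by shifting the field value."""
    return lambda x, y, z: a(x, y, z) - distance


def inside(shape, x, y, z):
    """Inside/outside is just the sign of the function at that point."""
    return shape(x, y, z) < 0.0


a = sphere(0.0, 0.0, 0.0, 1.0)
b = sphere(1.5, 0.0, 0.0, 1.0)

print(inside(union(a, b), 2.0, 0.0, 0.0))          # point only inside b
print(inside(intersection(a, b), 0.75, 0.0, 0.0))  # point in the overlap
print(inside(offset(a, 0.5), 1.2, 0.0, 0.0))       # point just outside a, inside the grown a
```

No faces are trimmed and no edges are stitched; every operation is a one-line composition of functions. One caveat worth knowing: after a min or max the result still classifies inside and outside correctly, but it is no longer an exact distance everywhere, so offsets applied after a Boolean are approximate unless the system corrects for it.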

For architects, the simplest way to think about it is this: instead of storing a fragile 3D object and modifying it piece by piece, the software stores the recipe. When you change an ingredient, it regenerates the form cleanly. For MCAD and kernel technology, that is the conceptual shift.

For machine learning, this is native territory. An implicit field is a classifier. Inside and outside are functions, not stitched surfaces. AI models can write, adjust, and reason over geometry expressed this way far more readily than over boundary artefacts mediated by opaque kernels.

But what does this have to do with AEC, which mainly doesn’t even use solid modelling kernels? Courter’s thinking has real implications if applied to a new BIM tool.

In Revit, a floor plate is a stored surface. If the grid moves, someone redraws it. In a geometry-as-code system, the floor plate is a function of site boundary, grid, setbacks, and target area. Change the grid and the floor re-derives automatically. An agent can explore thousands of grid variations because there is no geometry to patch, only rules to re-evaluate. This would mark the transition from BIM as a container to BIM as a substrate.
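A minimal Python sketch of that idea follows. It assumes a rectangular site, a uniform setback, and a square structural grid, none of which come from a real product; the function and class names are hypothetical. The point is that the floor plate is never stored, only derived, so trying another grid is just another function call.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FloorPlate:
    bays_x: int
    bays_y: int
    grid_m: float

    @property
    def area_m2(self) -> float:
        return self.bays_x * self.bays_y * self.grid_m ** 2


def floor_plate(site_w, site_d, setback, grid, target_area):
    """Derive the plate from rules; nothing is kept except the inputs."""
    bays_x = math.floor((site_w - 2 * setback) / grid)      # whole bays across
    max_bays_y = math.floor((site_d - 2 * setback) / grid)  # whole bays deep
    if bays_x < 1 or max_bays_y < 1:
        return None  # the buildable zone cannot take a single bay at this grid
    # Use just enough rows of bays to reach the target area, capped by the site.
    needed_y = math.ceil(target_area / (bays_x * grid ** 2))
    return FloorPlate(bays_x, min(needed_y, max_bays_y), grid)


def explore(site_w, site_d, setback, target_area, grids):
    """What an agent might do: re-evaluate the rule per grid, keep the closest fit."""
    options = [floor_plate(site_w, site_d, setback, g, target_area) for g in grids]
    options = [p for p in options if p is not None]
    return min(options, key=lambda p: abs(p.area_m2 - target_area), default=None)


best = explore(site_w=40, site_d=30, setback=3, target_area=600,
               grids=[6, 7.5, 8, 9])
print(best, best.area_m2)
```

Here `explore` tries four grids; an agent could just as easily try four thousand, because each variation costs one re-evaluation rather than one redraw.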

Revit and Forma

Autodesk understands the pressures of product development, the possible threats, and what it takes to remain the dominant global BIM player. Because Revit is mature, it remains an extraordinarily profitable product, deeply embedded and heavily relied upon. Its deep core, however, is effectively frozen. Development has increasingly moved to outer layers, cloud services, automation, and platform adjacencies. By all accounts, getting slanted and tapered walls took 20 years and required a deep dive.

Autodesk’s response has been to build around Revit rather than rebuild it. Forma is the clearest signal of where the company believes future intelligence will live. Data re-homing into cloud-native environments is not cosmetic. AI and AI agents require access to structured, centralised data and scalable compute. A desktop-bound artefact file cannot host that future.

Autodesk’s leadership is clear-eyed about where this is heading. CEO Andrew Anagnost describes the shift as moving toward “a system that understands intent, requirements, and outcomes” rather than discrete tools, with design factors “explored in parallel rather than in sequence.” This vision of continuous feedback across form, function, and constructability aligns closely with the solver-driven paradigm emerging across the industry. In Anagnost’s framing, agents “increasingly act on behalf of owners and teams, continuously tracking requirements and helping keep decisions aligned as conditions change.”

The new risk for Autodesk, and for others, is foundational. Revit is optimised for author-driven modelling and deterministic documentation. It was not designed to host continuously regenerating autonomous systems. Intelligence is therefore migrating around it. Revit becomes the publication layer rather than the design decision engine. That is not a roadmap issue. It is about how 1990s computer science codified the representation of a building for that generation of software tools.

Is BIM 2.0 too late?

There is a further, slightly uncomfortable layer to all of this that has implications not only for industry leader Autodesk, but also for the new generation of “BIM 2.0” tools that are trying to replace Revit outright. Products such as Snaptrude, Qonic, Arcol, Hypar, Autodesk Forma, NeoBIM, and Motif were largely conceived before the current wave of generative AI, large language models (LLMs), and agentic tooling reshaped what software can plausibly do. Their original thesis was straightforward and, at the time, entirely reasonable: Revit is a twenty-five-year-old product with a frozen core and an increasingly fragile relationship to modern operating systems, graphics stacks, and cloud workflows, so the industry needs a modern, cloud-native, collaborative modeller to take its place.

In isolation, that thesis still makes sense. A cleaner, faster, more interoperable modelling environment is an obvious improvement. The problem is that the ground underneath that improvement is now moving. If BIM is drifting from being a hand-authored artefact to being the computed outcome of solver-driven and agent-orchestrated processes, then replacing one manual modeller with another manual modeller starts to look like a strangely conservative ambition. It solves real problems around collaboration, performance, and interoperability, but it leaves the deeper production logic of the industry untouched. In a world where engineering systems are being generated in one pass from intent and constraints, and where agents are beginning to externalise large parts of coordination, documentation, and compliance, a better place to draw walls and place ducts may already be a secondary concern.

This puts the BIM 2.0 cohort in an awkward strategic position. The uncomfortable possibility is that a generation of well-funded, well-built, cloud-native BIM tools is arriving just as the industry’s centre of gravity shifts away from manual modelling altogether. If they remain primarily modelling tools, even very good ones, they risk being leapfrogged by tech stacks that treat modelling as a side effect rather than a core activity. In an agentic, solver-driven world, geometry is not something you painstakingly construct. It is something that falls out of a viability computation. The modeller becomes a viewport onto a continuously regenerated state, not the engine that produces it. With all this in mind, BIM 2.0 startups face the same strategic dilemma as Autodesk, just without Autodesk’s massive installed base and cash flow.

Some of these platforms are already pivoting in that direction. Hypar has always framed itself more as a generative system than as a traditional modeller. Forma is being positioned by Autodesk as a host environment for analysis, optimisation, and early-stage automation rather than a Revit clone. Others are experimenting with rule-based design, parametrics, and lightweight scripting. These moves are no longer optional extensions; they are existential pivots.

Seen in that light, the real competition for these companies may not be Autodesk at all. It may be the emergent stack of discipline solvers, internal agents, data-lake-centric workflows, and application-on-demand tooling that treats modelling as an implementation detail. In that world, a BIM 2.0 tool that does not become deeply agentic, solver-aware, and execution-centric risks being stranded in a shrinking middle ground: too modern to be a legacy cash cow, and too manual to be a future runtime.

The more radical unbundling of BIM now in progress is not from desktop to cloud, but from manual production to computational execution. In that transition, the question is no longer who builds the best modeller. It is who owns the runtime in which buildings get computed, validated, and continuously renegotiated against cost, carbon, compliance, and constructability.

To remain relevant over a ten-to-fifteen-year horizon, these platforms must reposition themselves not as better modellers, but as substrates for agentic and solver-driven design. That implies opening themselves to external orchestration, embedding generation engines deeply into their cores, treating BIM data as a live runtime rather than a file or document, and accepting that a growing fraction of their geometry will not be authored by humans at all.

Data sovereignty and paranoia

As BIM platforms move from authored artefact to execution substrate, control over data becomes absolutely strategic. It’s all about the data.

Brett Goodchild, senior product manager for building design at US retail giant Target, frames the stakes clearly: “AI is unavoidable in AEC, but the industry’s future won’t be settled by shinier versions of existing tools. The wake-up moment we are edging towards is enterprise data sovereignty. If we view AI as merely a way to build better tools faster, we’ve learned nothing from the friction points that have been exposed by BIM, structural and contractual.

“The disruption from AI isn’t the software; it’s the shift toward project autonomy, not in a generative design sense, but in a systemic sense where a project is driven by the owner owning and controlling their data without vendor interference and being driven by the owner’s internal requirements.”

This is the fundamental tension. The software vendors want customer model data in their clouds, where agents can operate at scale. Meanwhile, many firms want their data in their own lakes, where internal agents can be trained and deployed, away from the eyes of vendors and not shared with the hoi polloi.

There is mutual paranoia. Vendors fear AEC firms will learn their platform logic and bypass customer feature moats by creating their own applications on demand. AEC firms fear vendors learning proprietary production logic and internal workflows from hosted data.

The economic reality of this shift is beginning to crystallise, and it exposes a fundamental tension. Autodesk is developing MCP (Model Context Protocol) connectors to its Autodesk Platform Services infrastructure, enabling agentic AI systems to access design data through their APIs. This is forward looking and positions APS as the runtime for agentic workflows. However, the system will be tokenised, meaning every interaction with the data will be metered and charged.

This creates a compounding cost structure that firms are only just beginning to understand. They already pay for Autodesk product subscriptions. They already pay for cloud collaboration services like Autodesk Construction Cloud (ACC) and SaaS platforms that combine hosting with capabilities. Now they will pay a third time, on a per-access basis, every time an AI agent queries their own project data through MCP (Model Context Protocol) connectors attached to APS. And then they will pay a fourth time when the LLM provider processes that data. In testing agentic systems, I have personally already burnt through significant token volumes, and that is just experimentation. When agents are running continuous feedback loops across MEP, structure, compliance checking, and coordination, token consumption at production scale becomes a serious cost centre.
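The way those four layers stack up can be laid out as simple arithmetic. The Python sketch below is purely illustrative: every figure passed in is a made-up placeholder, not a real or rumoured price from Autodesk or any LLM provider, and the function name is hypothetical. What it shows is the structure of the bill, not its size.

```python
def monthly_cost_layers(seats, subscription_per_seat, cloud_per_seat,
                        agent_queries, access_fee_per_query,
                        tokens_per_query, llm_price_per_million_tokens):
    """Return the four cost layers described above, plus their total.

    Every argument is a hypothetical input; no real price list is assumed.
    """
    layers = {
        # Layers 1 and 2 scale with the number of people.
        "subscriptions": seats * subscription_per_seat,
        "cloud_services": seats * cloud_per_seat,
        # Layers 3 and 4 scale with how often agents touch the data.
        "metered_data_access": agent_queries * access_fee_per_query,
        "llm_tokens": agent_queries * tokens_per_query / 1_000_000
                      * llm_price_per_million_tokens,
    }
    layers["total"] = sum(layers.values())
    return layers


# Placeholder numbers only, chosen to make the arithmetic easy to follow.
example = monthly_cost_layers(
    seats=10, subscription_per_seat=300, cloud_per_seat=100,
    agent_queries=200_000, access_fee_per_query=0.01,
    tokens_per_query=2_000, llm_price_per_million_tokens=5,
)
for name, value in example.items():
    print(f"{name}: {value:,.2f}")
```

The first two layers grow with headcount, which firms know how to budget for. The last two grow with agent activity, and an agent running continuous feedback loops does not work office hours.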

The incentive structure this creates is perverse. Firms may conclude that extracting their data from vendor clouds and hosting it in their own data lakes, using their own compute resources to run queries without per-touch metering, is more economical than paying a gatekeeper tax every time an agent needs to read a wall height or check a pipe diameter. Professional data hosting services contracted for federated project work, or internal infrastructure where firms control the LLM stack, start to look significantly more attractive than vendor-metered cloud services.

Autodesk is not alone in facing this dilemma. Any platform vendor moving to an agentic future must decide whether to charge for access to data that customers already own and are already paying to store. The risk is that aggressive metering accelerates the very thing vendors fear most: customers taking their data elsewhere and running their own applications on it.

Code on demand

AI is now enabling anyone to create applications on demand, by doing the coding for you. This will break SaaS bundling assumptions. The starting gun was Anthropic’s legal skill release in February this year, which sank the whole software application market. Wall Street started to realise that the AI firms were going to tread on the toes of traditional value-add software companies as they absorb vertical skill sets.

For the design world, the implications became viscerally real when Google dropped Gemini 3 Deep Think. The demonstration was quietly destabilising. It turned a 2D sketch with text prompt into a 3D printable STL model. This collapses a whole standard product design workflow – ideation, modelling, analysis to export – into one conversational pipeline. In generating the output, a complex procedural gyroid lattice solid, the system calculated wall thickness and lattice density to support a 3kg load without buckling, while allowing airflow for cooling. It renders it with HDRi quality. Deep Think analyses the drawing, builds the complex shape, and generates a file so you can create the physical object with 3D printing. Courter has already applied to Google to access the API.

When workflow intelligence becomes composable and core to external LLMs, platform stickiness appears to weaken. But there is a sobering counterpoint to the “applications on demand” optimism.

John Egan, CEO of BIM Launcher, a specialist in Common Data Environment (CDE) workflows and AEC platforms, raises a practical concern about maintainability: “The challenge with any AI written code is in its maintainability. Your developers won’t really understand what is in there and what happens if something mission critical breaks? Let’s say you replace five jobs with one person and a vibe coded app. If that breaks, there is now one person with five jobs to cover and a bundle of code that no one understands.”

Egan’s point cuts to the difference between generating code and maintaining systems. “What people don’t understand is that the investment in codebases is in the refinement. Like an essay, you start with a draft and refine it so that it has the right abstractions and that it is maintainable for when it does break or needs to be extended.” Moreover, professional software development involves far more than functional code. Substantial investment goes into user experience design, interface refinement, and quality assurance, none of which AI-generated prototypes inherently provide. A working demo is not a production system.

This suggests that while AI may democratise initial development, it does not eliminate the need for software engineering discipline, design expertise, or rigorous testing. The practices around AI-assisted development, not the AI itself, may be where the real innovation needs to happen.

This tension, between the promise of rapid application generation and the reality of long-term system maintenance, remains unresolved. Geometry definition may cease to be the moat, but operational reliability, user experience, and maintainability become new strategic concerns. Data and orchestration become the battleground.

Automation = billing collapse

As solver-driven workflows mature, the rhythm of production is set to change. If MEP and structural systems can re-solve in near real time, architecture can no longer proceed as a largely isolated first act, followed by downstream correction. The traditional pattern of design, then coordinate, then revise begins to collapse. Instead of sequential exchanges between disciplines, feedback becomes continuous.

In a solver-driven environment, engineering is not a downstream check. It sits inside the act of designing itself. When a wall shifts, the structural logic shifts with it. When spans extend, loads recalculate. The response is not issued days later in a coordination meeting. It happens as the model evolves.
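To make the idea concrete, here is a minimal sketch in Python of a structural quantity that is derived rather than stored, so it updates the moment the span changes. It assumes the simplest textbook case, a simply supported beam under a uniformly distributed load, and the class and field names are purely illustrative. It does not describe how any real solver works.

```python
from dataclasses import dataclass


@dataclass
class SimplySupportedBeam:
    """Hypothetical beam whose structural results are computed from its inputs."""
    span_m: float          # clear span in metres
    udl_kn_per_m: float    # uniformly distributed load in kN/m

    @property
    def max_moment_knm(self) -> float:
        # Mid-span bending moment for a simply supported beam: w * L^2 / 8
        return self.udl_kn_per_m * self.span_m ** 2 / 8

    @property
    def reaction_kn(self) -> float:
        # Each support carries half the total load: w * L / 2
        return self.udl_kn_per_m * self.span_m / 2


beam = SimplySupportedBeam(span_m=6.0, udl_kn_per_m=10.0)
moment_before = beam.max_moment_knm

# The architect extends the span; nothing is re-issued, the value is recomputed on read.
beam.span_m = 8.0
moment_after = beam.max_moment_knm

print(moment_before, moment_after, beam.reaction_kn)
```

The point of the sketch is that there is no hand-off step: the engineering result is a function of the design state, not a separate deliverable.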

Iteration ceases to be a hand-off between disciplines. It becomes simultaneous. Architecture and engineering occupy the same moment rather than adjacent ones, and that has economic consequences.

The industry’s fee structures evolved around time and sequence. One discipline completes a package, passes it on, and bills accordingly. But if coordination is embedded and iteration costs approach zero, the logic of billing for hours spent adjusting drawings becomes harder to defend.

When machines absorb production, value shifts to constraint definition, orchestration, and outcome responsibility. Firms that continue to measure themselves primarily by production throughput may discover that the production layer is precisely the part most vulnerable to automation.

Liability, audit and trust

It’s early days. AEC firms are still assessing what this means for their practices. But as with much technology in this space, the pace of adoption will be shaped less by technical capability than by legal workflows and frameworks. Autonomous generation raises obvious questions. Who is responsible for an AI-generated design that later fails? How is intent audited? How are decisions traced?

Software vendors understandably want economic upside without legal exposure. Firms want productivity without unmanageable risk. Regulatory frameworks will need to catch up. Audit trails, machine-readable provenance, and explainable generation become essential.

This transition may follow a pattern familiar to the adoption of autonomous driving. First comes augmentation (copilot). The system assists, suggests, optimises, but a human remains firmly in control. Then comes generation, where the machine begins to produce viable outcomes with limited intervention. Finally, normalisation arrives, once regulation, liability frameworks and professional practice have adjusted to accommodate the shift. This technology does not leap straight to autonomy. It moves through stages, each one reshaping expectations and redistributing responsibility.

May Winfield, global director of commercial, legal and digital risks at Buro Happold, frames this more as transformation than replacement: “AI augments and enhances and will require adaptation of jobs or side steps to complementary roles, enabling more analysis or thinking or creative time, including for roles like lawyers and consultants, rather than AI replacing humans.” Her view is that risk mitigation requires sustained human involvement: “The risks and issues of AI are not ones that will be fixed overnight, and some will always remain due to the nature of AI. Humans must remain in the loop.”

This perspective aligns with the phased transition model. Augmentation does not inevitably progress to full autonomy. In domains where liability and professional responsibility are non-negotiable, the loop may never fully close. The question is not whether humans stay involved, but where in the workflow their judgement becomes essential rather than routine.

The old frameworks of planning authorities, building control, and trades on-site still rely on the 2D drawing as the legal instrument of record. This isn’t just a technical lag, it’s institutional inertia. I don’t see this changing quickly, but I am sure that AI agents will serve to automate and take the drudgery out of the process.

Regulatory bodies and insurers require the finality a drawing set provides. Moving to “model-as-authority” means a coordinated shift across all the usual stakeholders who have no incentive to move. Until the legal and regulatory “last mile” is bridged, BIM as an end-to-end live execution substrate will remain a theoretical exercise.

The agentic timeline

At the current rate of development, I would stick my neck out and predict that from 2026 to 2030, augmentation dominates. AI assists, suggests, and accelerates. AEC firms start developing their own agents and applying them to their own projects. From 2028 to 2035, discipline-level smart generation becomes mainstream in engineering-heavy domains. Between 2035 and 2045, agentic BIM becomes normalised under new regulatory and economic structures.

Architecture will lag engineering. The architect’s key traits, dealing with clients, spatial intent and aesthetic judgement, are much harder to formalise than duct routing or load paths. But the economic pressure from solver-driven engineering will be impossible to ignore. The core concern is that bread-and-butter buildings, simple structures and commonly designed building types, will face increasing automation across all firms, even signature architects. Combined with kit-of-parts construction, the opportunity for design-build will never have looked so good.

Conclusion

This feels like a starting-pistol moment for the industry, not because everything is about to change overnight, but because the direction of travel has become hard to ignore. The early evidence is already here. Discipline solvers are producing viable engineering systems in one pass. Agents are beginning to stitch together work that used to require armies of coordinators, technicians, and spreadsheet glue. Visual tools that began as rendering accelerants are drifting toward spatial inference and structured output. Taken individually, each of these looks like another productivity story. Taken together, they look like the beginnings of a different production stack.

Ultimately, we may be witnessing the succession of BIM rather than its evolution. For three decades, BIM has revolved around authored objects: walls, doors, slabs, assemblies. These are intelligent in a limited, local sense, but they remain static dumb artefacts. They are edited, coordinated and documented. They are not continuously recomputed against a multitude of competing objectives, all watched over by machines of loving grace. Legacy platforms cannot address the fundamental architecture problem by adding AI features alone.

A true execution substrate likely requires an entirely new architecture – an open, cloud-native database that prioritises machine readability and geometry as code over the traditional authoring interface. We will most certainly see a move toward environments where the model is a live (alive!) version-controlled repository of state, accessible via robust APIs rather than files from proprietary desktop software.

I suspect we will also retain the BIM label for comfort, but the honest framing is that in an agentic world, we will need to build something new. The value of this next generation won’t lie in modelling speed, but in its ability to coordinate machine intelligence across a building’s lifecycle. We are currently watching our old tools strain under the weight of a future they weren’t built to carry.

An agentic BIM solution is not about replacing designers. It is about replacing the decaying computer science of the 90s with truly computable substrates. In an agentic model humans define intent: spatial quality, performance targets, budget constraints, carbon limits, constructability thresholds. Machines take those declarations and iterate across them. They test options, optimise tradeoffs and converge towards viable solutions across structure, MEP, cost and compliance. The designer remains responsible for judgement. The machine absorbs the combinatorial burden.

The shift only becomes possible once geometry is executable and intent is declarative. When geometry can be recomputed from rules rather than having to be manually adjusted, and when goals are expressed as constraints rather than embedded in drawings, BIM ceases to be something agents work around. It becomes the environment they operate within.
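As a rough illustration of "declarative intent" and "executable geometry", the Python sketch below states goals as named constraints and derives the geometry (a column count) from a single rule parameter (bay spacing). Every name, limit and candidate value is hypothetical and chosen only for the example. A real solver would search a far larger space with far richer checks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class GridOption:
    """Hypothetical structural grid defined by a rule, not by drawn columns."""
    bay_m: float      # column spacing: the rule parameter
    length_m: float   # overall building length

    @property
    def bays(self) -> float:
        return self.length_m / self.bay_m

    @property
    def columns(self) -> int:
        # Geometry is recomputed from the rule whenever it is read.
        return round(self.bays) + 1


Constraint = Callable[[GridOption], bool]

# Intent is declared as constraints rather than embedded in drawings.
intent: Dict[str, Constraint] = {
    "span no greater than 9 m": lambda o: o.bay_m <= 9.0,
    "no more than 8 columns": lambda o: o.columns <= 8,
    "bays divide the length evenly": lambda o: abs(o.bays - round(o.bays)) < 1e-9,
}


def viable_options(length_m: float, candidate_bays: List[float]) -> List[GridOption]:
    """Return every candidate grid that satisfies all declared constraints."""
    options = [GridOption(bay, length_m) for bay in candidate_bays]
    return [o for o in options if all(check(o) for check in intent.values())]


viable = viable_options(36.0, [4.0, 6.0, 7.5, 9.0, 12.0])
# The machine iterates; a simple objective (fewest columns) picks among survivors.
best = min(viable, key=lambda o: o.columns)
print([o.bay_m for o in viable], best)
```

Changing a constraint, say tightening the span limit, changes the set of viable grids without anyone redrawing a column. The human still decides which constraints matter.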

The danger for AEC practices and firms is not that they fail to buy the right new tool next year. It is that they spend the next decade doing what they have always done: optimising, standardising, and hardening workflows around a model of work that is quietly losing its economic and technical foundations. There is an understandable instinct, especially in risk-averse environments, to treat the current BIM stack as the end game: to refine templates, add more standards, tighten QA, build more libraries, script more routines, and lock down more process. That work is not wasted in the short term, because projects still have to get delivered, and the contractual world still runs on drawings, packages, and stage gates. However, over a long enough horizon, there is a real possibility that the industry ends up perfecting the wrong thing, with the legacy applications wagging the dog.

This raises a fundamental question: not whether this transition occurs, because it’s coming to a cloud near you, but where this thinking will take root.

Will the computational substrate emerge gradually from within today’s platforms, layered onto existing geometry engines and file structures? Or will it be built from first principles by those who conclude that legacy representations, however successful historically, cannot carry the computational load of continuous, multi-domain optimisation? This decision, more than any individual feature release or AI demonstration, will shape the next twenty years of AEC software.

Today’s manual BIM is not about to vanish overnight. But it is no longer a credible destination.

NXT BLD 2026

If you find this topic fascinating, please consider coming to AEC Magazine’s NXT BLD event in London – 13 / 14 May. All the leading AEC AI software developers will be there, including Augmenta, Endra, HVAKR and Finch3D, and there will be many presentations on research into what’s coming next.