High-end workstation GPU not only features expected generational improvements, but promises to boost performance further by changing the way viewports and scenes are rendered.

Nvidia has launched the Nvidia RTX 6000, a high-end workstation GPU built on the company’s new Ada Lovelace architecture, named after the English mathematician credited with being the first computer programmer.

The Nvidia RTX 6000 GPU is said to deliver two to four times the performance of the previous-generation ‘Ampere’ Nvidia RTX A6000 and promises big advances in real-time rendering, graphics, AI and compute, including engineering simulation. It is not to be confused with 2018’s Turing-based Nvidia Quadro RTX 6000.

The Nvidia RTX 6000 is a dual-slot graphics card with 48 GB of GDDR6 memory with error-correcting code (ECC), a maximum power consumption of 300 W and support for PCIe Gen 4, giving it full compatibility with workstations featuring the latest Intel and AMD CPUs. It supports Nvidia virtual GPU (vGPU) software for multiple high-performance virtual workstation instances and boasts three times the video encoding performance of the Nvidia RTX A6000, for streaming multiple simultaneous XR sessions using Nvidia CloudXR.

The Nvidia RTX 6000 offers all the generational improvements you’d expect from a new GPU architecture – third-gen RT Cores for ray tracing, fourth-gen Tensor Cores for AI compute, and next-gen CUDA cores for graphics and simulation – but there are also significant changes in the way the ‘Ada Lovelace’ GPU carries out calculations to increase performance in viz-centric workflows.

Deep Learning Super Sampling 3 (DLSS 3) and Shader Execution Reordering (SER) are the two technologies that stand out.

Deep Learning Super Sampling 3 (DLSS 3)

Nvidia DLSS has been around for several years and, with the new ‘Ada Lovelace’ Nvidia RTX 6000, is now in its third generation. DLSS uses deep learning-based upscaling techniques where frames are rendered at a lower resolution and the GPU’s ‘AI’ Tensor Cores are then used to predict what a high-res frame would look like.

With Nvidia’s previous generation ‘Ampere’ GPUs, DLSS 2 took a low-resolution current frame and the high-resolution previous frame to predict, on a pixel-by-pixel basis, what a high-resolution current frame would look like.

With DLSS 3, the AI generates entirely new frames rather than just pixels. It analyses the new frame and the prior frame to work out how the scene is changing, then generates entirely new frames without running them through the graphics pipeline. According to Nvidia CEO Jensen Huang, this approach means DLSS 3 will benefit both GPU-limited and CPU-limited games.
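The idea of comparing two frames to see how the scene is changing, then producing a frame that was never rendered, can be illustrated with a deliberately tiny sketch. This is not Nvidia's method, which relies on a trained neural network and dedicated hardware. The example assumes one-dimensional 'frames', a single object moving sideways, and pixels that wrap at the edges. All function names are hypothetical.

```python
def shift_frame(frame, shift):
    """Move every pixel `shift` places to the right, wrapping at the edges."""
    n = len(frame)
    return [frame[(i - shift) % n] for i in range(n)]


def estimate_shift(prior, new, max_shift=4):
    """Brute-force motion estimate: the shift that best maps `prior` onto `new`."""
    best_shift, best_err = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        candidate = shift_frame(prior, s)
        # Sum of absolute pixel differences between the shifted prior and the new frame
        err = sum(abs(a - b) for a, b in zip(candidate, new))
        if err < best_err:
            best_shift, best_err = s, err
    return best_shift


def generate_between(prior, new):
    """Build an in-between frame by moving the prior frame half the estimated distance.
    No 'rendering' happens here: the frame is derived purely from the two inputs."""
    return shift_frame(prior, estimate_shift(prior, new) // 2)


prior = [0, 0, 9, 9, 0, 0, 0, 0, 0, 0]   # bright object at pixels 2-3
new = shift_frame(prior, 4)              # same object, now at pixels 6-7
middle = generate_between(prior, new)
print(middle)  # [0, 0, 0, 0, 9, 9, 0, 0, 0, 0]
```

The generated frame places the object halfway along its path, using only the two frames that already exist.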

Huang made no reference to how DLSS 3 might benefit professional 3D applications. However, while DLSS 2 was used mainly in GPU-limited viz applications such as Enscape and Autodesk VRED, we wonder if DLSS 3 could deliver big performance improvements for 3D CAD, which tends to be CPU-limited.
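A back-of-envelope model shows why frame generation might matter for CPU-limited software in a way that upscaling does not. The sketch below assumes that CPU and GPU work overlap, so the slower of the two sets the frame rate, and that each generated frame costs nothing. Both are simplifications, and the function names and timings are hypothetical, not Nvidia figures.

```python
def rendered_fps(cpu_ms, gpu_ms):
    """Frames per second when every frame needs both CPU and GPU work.
    Assumes the two overlap, so the slower stage sets the pace."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def upscaled_fps(cpu_ms, gpu_ms, gpu_speedup):
    """Upscaling (DLSS 2 style): only the GPU's share of each frame gets cheaper."""
    return rendered_fps(cpu_ms, gpu_ms / gpu_speedup)


def generated_fps(cpu_ms, gpu_ms, generated_per_rendered=1):
    """Frame generation (DLSS 3 style): extra frames are displayed without
    involving the CPU or the graphics pipeline (assumed free in this model)."""
    return rendered_fps(cpu_ms, gpu_ms) * (1 + generated_per_rendered)


# Hypothetical CPU-limited CAD viewport: 20 ms of CPU work, 5 ms of GPU work per frame
cpu_ms, gpu_ms = 20.0, 5.0
print(rendered_fps(cpu_ms, gpu_ms))        # 50.0
print(upscaled_fps(cpu_ms, gpu_ms, 2.0))   # 50.0, still waiting on the CPU
print(generated_fps(cpu_ms, gpu_ms))       # 100.0
```

In this model, halving the GPU cost changes nothing because the CPU remains the bottleneck, whereas displaying one generated frame per rendered frame doubles the frame rate.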

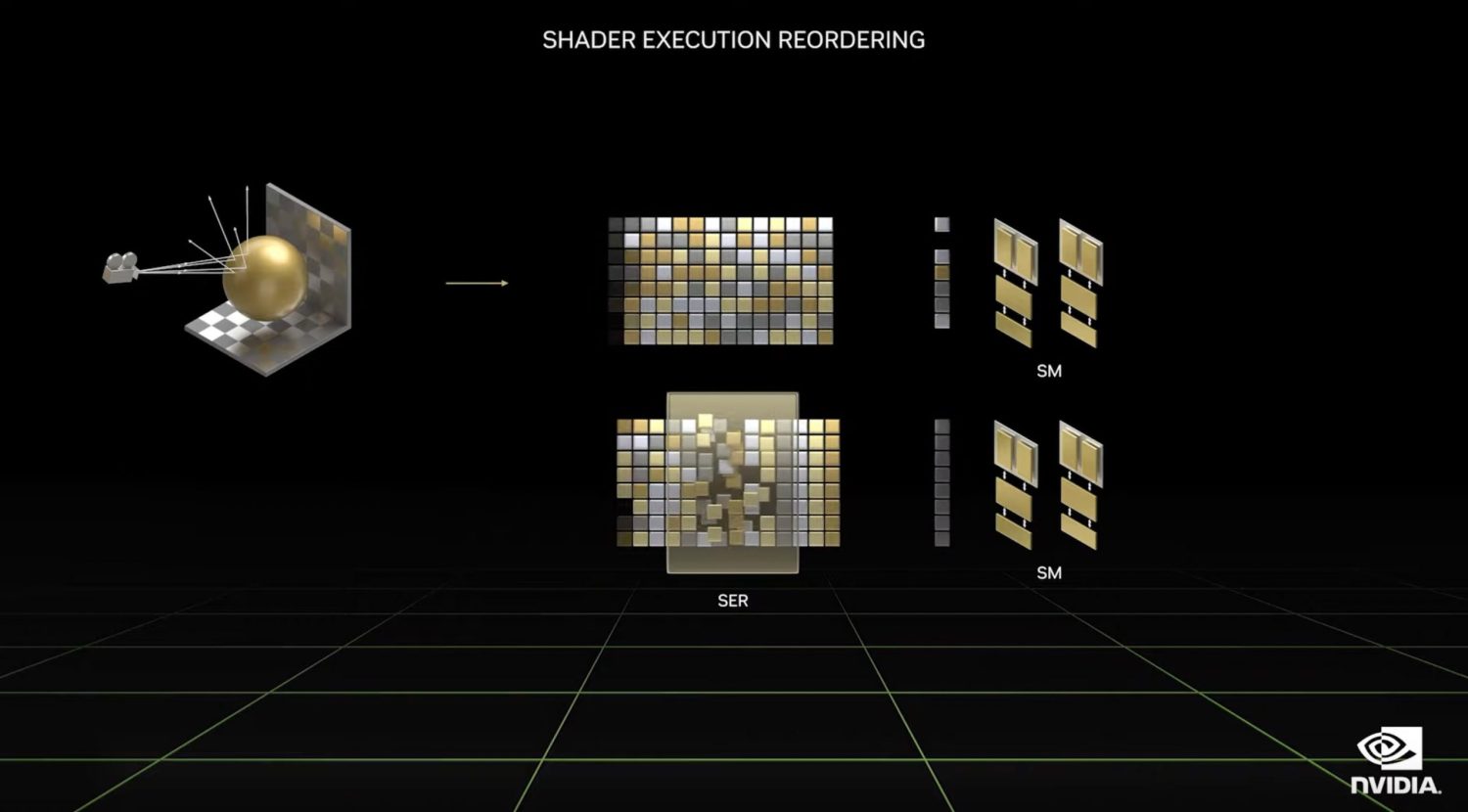

Shader Execution Reordering (SER)

Nvidia explains that GPUs are most efficient when processing similar work at the same time. However, with ray tracing, rays bounce in different directions and intersect surfaces of various types. According to Huang, this can lead to different threads processing different shaders or accessing memory that is hard to coalesce or cache.

With Shader Execution Reordering (SER), the Nvidia RTX 6000 dynamically reorganises its workload, so similar shaders are processed together. According to Nvidia, SER can give a two to three times speed-up for ray tracing and a frame-rate increase of up to 25%. Nvidia did not announce which software applications will take advantage of this technology.
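The benefit of grouping similar work can be sketched with a simple count. The example below is a simulation, not the hardware mechanism: it assumes threads run in groups of 32 (the warp size on Nvidia GPUs), that a group needs one pass per distinct shader among its threads, and that ray hits land on one of four hypothetical materials at random.

```python
import random

WARP_SIZE = 32  # threads that execute together in lockstep


def shader_passes(shader_ids, warp_size=WARP_SIZE):
    """Total passes needed if each warp runs once per distinct shader it contains."""
    total = 0
    for start in range(0, len(shader_ids), warp_size):
        warp = shader_ids[start:start + warp_size]
        total += len(set(warp))  # divergent warps pay once per shader
    return total


random.seed(0)
# Hypothetical workload: 1,024 ray hits, each needing one of four material shaders
hits = [random.randrange(4) for _ in range(1024)]

unsorted_passes = shader_passes(hits)
# Reordering: group hits by shader so each warp mostly runs a single shader
reordered_passes = shader_passes(sorted(hits))

print(unsorted_passes, reordered_passes)
```

With random hits, almost every warp contains all four shaders. After sorting, only the warps that straddle a boundary between shader groups need more than one pass.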

Engineering simulation

For the launch of the Nvidia RTX 6000, the focus was mainly on graphics-centric workflows. However, Nvidia also dedicated some time to engineering simulation, specifically the use of Ansys software, including Ansys Discovery and Ansys Fluent for computational fluid dynamics (CFD).

“The new Nvidia Ada Lovelace architecture will enable designers and engineers to continue pushing the boundaries of engineering simulations,” said Dipankar Choudhury, Ansys fellow and HPC Centre of Excellence lead. “The RTX 6000 GPU’s larger L2 cache, significant increase in number and performance of next-gen cores and increased memory bandwidth will result in impressive performance gains for the broad Ansys application portfolio.”

Nvidia showed a car model set up for a wind tunnel analysis in Ansys Discovery to perform external aerodynamics simulation with flow inlets, pressure outlets, and wall boundary conditions. It showed how the Nvidia RTX 6000 can allow multiple design alternatives to be explored in real time, demonstrating that when the flow inlet velocity is changed, the results can be viewed instantly.

Nvidia also highlighted the benefits of having 48 GB of memory, explaining that with the Nvidia RTX 6000 users can increase the fidelity of the solver to perform more accurate simulations and still obtain the results in near real time.

The Nvidia RTX 6000 workstation GPU will be available from global distribution partners and manufacturers starting in December.