By putting workstations and BIM models in the data centre, AEC firms can transform collaborative workflows and run CAD software from anywhere. By Greg Corke

Having a personal workstation to run 3D CAD or BIM software used to be a given, but there is now a growing trend to centralise workstation resources with designers accessing them remotely with low powered clients.

Centralised workstations (and graphics servers) are usually mounted on racks inside a data centre. They can be located on premise, off premise, or even in the cloud and typically include one or two multi core CPUs, powerful GPUs, lots of memory and fast storage. Each machine is usually shared between multiple users, although they can be dedicated to individuals.

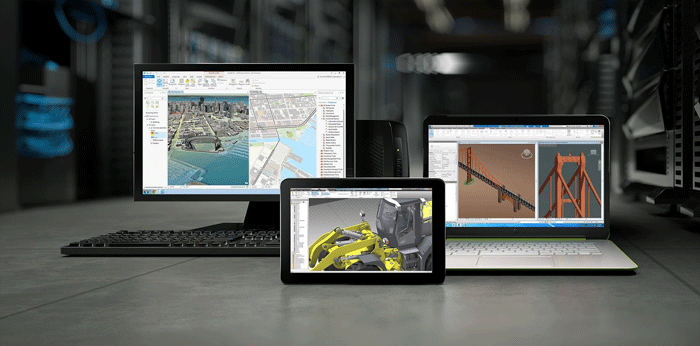

As the CAD or BIM software runs on servers inside the data centre (including the real time 3D graphics rendering), the client device does not need to be very powerful. It can also run pretty much any operating system, be that iOS, Android, OS X, Linux or Windows. This means users can access their CAD software with inexpensive PCs, laptops, zero clients, tablets, even smart phones — although handheld devices are best suited to viewing and markup and not precise CAD work (think fat fingers, small screens).

There are a number of different remote workstation technologies, most of which involve delivering rendered, encrypted pixels from the data centre to the client. These can be software-based (Citrix, VMware and Teradici) or hardware-based (Teradici).

As no actual CAD or BIM data ever leaves the controlled environment of the data centre, there can be huge data security benefits. Sensitive company data cannot fall into the wrong hands through misplaced or insecure laptops. And, as data is backed up live, rather than when the user returns to the office, work is not lost if a laptop or desktop suffers a catastrophic hardware failure.

Keeping data in a central data store, right next to the CAD software, can also offer massive benefits for workflow and collaboration.

Huge BIM models do not need to be transferred and synced between server and local workstation, which can take hours over a Wide Area Network (WAN). Design review sessions for geographically dispersed teams can be started instantly.
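To put the 'hours over WAN' point in context, here is a rough back-of-the-envelope sketch of how long a file copy takes over a given link. The model size, link speed and the 80% efficiency factor in the example are illustrative assumptions, not figures from any particular firm or network.

```python
def transfer_time_hours(model_size_gb: float, link_mbps: float,
                        efficiency: float = 0.8) -> float:
    """Estimate the hours needed to copy a file over a WAN link.

    model_size_gb: file size in decimal gigabytes (1 GB = 8,000 megabits).
    link_mbps:     nominal link speed in megabits per second.
    efficiency:    assumed fraction of the nominal speed actually achieved
                   (hypothetical default; real links vary widely).
    """
    if link_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_mbps must be > 0 and efficiency in (0, 1]")
    megabits = model_size_gb * 8_000
    seconds = megabits / (link_mbps * efficiency)
    return seconds / 3600


if __name__ == "__main__":
    # Assumed example: a 20 GB project dataset over a 20 Mbps office link.
    print(f"{transfer_time_hours(20, 20):.1f} hours")
```

With the data held centrally, only screen pixels cross the WAN, so this copy step largely disappears from the workflow.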

As data remains in the data centre and is not ‘checked out’ to laptops or USB drives, version control can also be improved and syncing issues reduced.

With one central data centre serving several satellite offices, data does not need to be replicated between sites. It is even possible to collaborate across continents, with architects, engineers and contractors all using the same data centre. In this case though, attribution of software and hardware costs needs to be considered.

Of course, network performance, particularly over WAN, is very important. This is governed by both latency (reaction time, measured in milliseconds) and bandwidth (the data rate, measured in Gigabits per second).

Host machines can be thousands of miles away, but the closer they are to the end user, the lower the latency and better the user experience. No one likes to feel a lag between moving the mouse and seeing the 3D CAD model rotate on screen.

IMSCAD, a BIM software virtualisation specialist, recommends anything under 70ms of ‘round trip’ latency for the best experience, but latencies as high as 150ms are workable. An MPLS (Multiprotocol Label Switching) private connection (which does not route through public channels) is often recommended when working between offices, though it can work on the open Internet, even 3G and 4G. WAN optimisation solutions, such as those offered by Riverbed, can also help.
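The latency guidance above can be expressed as a simple check. This is a minimal sketch: the two thresholds come from the IMSCAD figures quoted here, while the function name and the 'poor' label for anything above 150ms are assumptions added for illustration.

```python
BEST_UNDER_MS = 70      # "anything under 70ms" gives the best experience
WORKABLE_UP_TO_MS = 150  # latencies "as high as 150ms are workable"


def rate_latency(round_trip_ms: float) -> str:
    """Classify a measured round-trip latency for remote 3D CAD use."""
    if round_trip_ms < 0:
        raise ValueError("latency cannot be negative")
    if round_trip_ms < BEST_UNDER_MS:
        return "best"
    if round_trip_ms <= WORKABLE_UP_TO_MS:
        return "workable"
    # Assumption: the article gives no label beyond 150ms.
    return "poor"


if __name__ == "__main__":
    for ms in (25, 90, 220):
        print(ms, "ms ->", rate_latency(ms))
```

In practice the round-trip figure would come from a ping or similar measurement between the client site and the data centre.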

Deploying centralised workstations can be a complex process and specialist consultants are usually recommended.

However, once the data centre is set up and optimised for CAD workflows, the day-to-day management of workstation resources can be much easier than with distributed personal workstations.

IT administrators do not have to worry about maintaining individual machines spread across multiple sites. Service packs, fixes and upgrades can all be carried out in one place — inside the data centre, rather than scrambling about under desks. In addition, ultra low wattage zero clients that sit on desks require little to no maintenance and, as they are passively cooled, completely remove heat and noise from the design office.

New workstations can be spawned on demand, as and when projects dictate or workforces scale. There is no need to worry about the availability of local CAD-capable workstations. With zero clients on the desktop, should one fail, replace it with a new one in minutes, without even dropping the CAD session.

Workstations used to be the preserve of the hardcore CAD user as their high cost was hard to justify for part time users. As a result, project managers and other senior staff usually made do with office PCs or laptops. Now they can get access to a shared pool of high-end workstations on demand, as and when required.

With centralised workstations CAD users are no longer chained to their desks. Bandwidth and latency permitting, an architect can use the same high-performance CAD workstation from pretty much anywhere. This could mean tweaking live project data at a client’s office, accessing the very latest revisions on site, or working from home in the evening or weekend.

Workstation virtualisation

There are a number of ways centralised workstations can be deployed. In the most simple form, the designer has access to a dedicated machine that just happens to be in the data centre rather than under his or her desk.

This is usually called a ‘one-to-one connection’ but is also commonly referred to as a ‘remote workstation’ or ‘bare metal’ workstation. It is usually done with a 1U or 2U workstation, where ‘U’ refers to the number of units the machine takes up in a server rack.

A one-to-one connection to a 1U or 2U workstation is a good solution for particularly demanding 3D applications — 3ds Max, for example — but it does not make the best use of rack space or resources if you want to deliver BIM applications to lots of users.

For AEC firms to really get the most out of centralised workstations, the workstation or server needs to be virtualised.

Virtualisation is where the workstation’s physical resources are broken down into a number of ‘logical’ resources, which are then used by individual users. The main players in this space are Citrix, VMware, Microsoft and Teradici, not counting the manufacturers of the workstations and servers themselves, of which there are many.

Virtual Desktop Infrastructure (VDI) is the most talked about virtualisation technology, and many see it as the future of CAD software delivery. Each user has access to his or her own virtual machine (VM) with its own desktop operating system and CAD applications.

As the entire desktop is hosted inside the data centre the client requirements can be very low. In fact, zero clients with little to no processing, storage and memory, and no host operating system are often used.

VDI offers AEC firms a great deal of flexibility in how they support the workforce. Hardware resources in VMs can be matched to different types of CAD users. For example, an entry-level Revit user who only models Revit families may need 8GB RAM, two CPU cores and entry-level graphics, whereas a power user who co-ordinates architecture, MEP and structural BIM models might need 24GB RAM, four CPU cores and mid range graphics.
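As a rough illustration of how these profiles translate into user counts, the sketch below works out how many VMs of each type fit on one host. The two profiles use the RAM and core figures quoted above; the host size (256GB RAM, 36 cores) is a hypothetical example, and the calculation assumes no oversubscription and ignores hypervisor overhead and GPU limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmProfile:
    name: str
    ram_gb: int
    cpu_cores: int


# RAM and core counts taken from the examples in the text.
ENTRY = VmProfile("entry-level Revit user", ram_gb=8, cpu_cores=2)
POWER = VmProfile("BIM co-ordination power user", ram_gb=24, cpu_cores=4)


def max_vms(profile: VmProfile, host_ram_gb: int, host_cores: int) -> int:
    """VMs of one profile that fit on a host, limited by RAM or cores,
    whichever runs out first."""
    return min(host_ram_gb // profile.ram_gb,
               host_cores // profile.cpu_cores)


if __name__ == "__main__":
    # Hypothetical host: 256GB RAM and 36 physical cores.
    for profile in (ENTRY, POWER):
        print(profile.name, "->", max_vms(profile, 256, 36), "VMs")
```

On this assumed host the core count, not memory, is the limiting factor for both profiles, which is why the balance between cores and GHz matters when choosing CPUs.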

VMs can be persistent, i.e. users can be assigned the same VM every time they log in. However, pooling VMs and assigning profiles to suit changing workflows often makes the best use of resources.

While deployment of CPU, memory and storage is relatively standard in a centralised workstation or server, things get a bit more complex when it comes to the GPU – the all-important processor that makes it possible to run 3D graphics-intensive applications in virtualised environments.

For VDI there are two main graphics delivery models: GPU pass through and virtual GPU (vGPU).

With GPU pass through, each GPU in the system is directly mapped to a VM. As each VM has a dedicated GPU it is considered to be the best performing virtualised solution for 3D CAD.

GPU pass through is traditionally handled by a number of add-in graphics cards — think Nvidia Quadro or AMD FirePro — the exact same cards you would find in a desktop workstation. A centralised workstation can typically host four of these CAD-optimised graphics cards.

Virtual GPU, on the other hand, is all about flexibility and maximising the density of 3D users on a workstation or server. Here, the GPU is ‘virtualised’ and dedicated resources assigned to multiple users.

Nvidia was first to market with vGPU and its GRID technology (used with Nvidia GRID or Nvidia Tesla GPUs) has been available for a few years now. However, both AMD and Intel have recently announced their own vGPU technologies: AMD Multiuser GPU and Intel Graphics Virtualization Technology-g (Intel GVT-g), which is being built into Intel CPUs.

The beauty of virtual GPU is that it allows firms to adapt to the changing needs of users and projects. On a single rack workstation one could, in theory, serve 32 entry-level CAD users at the start of a project and eight power users as it progresses, without having to change any hardware.

VDI for 3D CAD is a relatively new technology and while there is a lot of hype around it, there is still a place for application virtualisation in the AEC sector. IMSCAD, for example, still recommends it to some firms that simply want a remote access capability for AutoCAD and Revit.

With application virtualisation, rather than delivering a dedicated VM with a virtual desktop, applications are delivered to a desktop client on demand. In many cases firms keep their existing desktop workstations and run one or two applications virtualised to give them the benefits of a centralised solution.

In terms of workstation hardware, one of the main differences between a VDI deployment and application virtualisation is how resources are assigned. With VDI, each user typically gets dedicated CPU, GPU and memory resources. With application virtualisation these are usually shared.

In theory this means users may not get guaranteed performance (if everyone tries to spin a 3D model at the same time, for example, it may slow down) but by pooling resources it does have the benefit of being able to serve a lot more users on the same workstation hardware. See the IMSCAD article for more on application virtualisation and VDI.

The cloud

Deploying virtualised workstations can mean a considerable capital expense. However, AEC firms can still get many of the benefits without having to invest in the technology upfront.

There are a number of services out there that use the cloud — public or private — to deliver virtual desktops or applications to end users over the Internet. Users typically ‘rent’ machines on a monthly basis.

Providers include FRAME, Cloudalize and Open Boundaries. Panzura also offers cloud-based VDI as an add-on to its core global file locking technology.

Next year will see the launch of IMSCAD Cloud, a graphics virtualisation cloud service where firms can pay for virtualised applications, per user, per month. Microsoft and Amazon also have cloud services that are suitable for 3D CAD in the offing.

Conclusion

Investing in a centralised workstation solution is not just a case of buying some machines and plugging them in.

Firms need to think beyond CPUs, GPUs, memory and SSDs and consider data centres, enterprise storage and fast network infrastructures. The entire system then needs to be optimised for 3D CAD, new workflows defined and best practice established. Importantly, end users need to be engaged throughout the deployment process and their expectations managed. If not, the project may never get off the ground.

Moving to a centralised workstation environment is a complex IT solution — some say a complete business transformation — that needs careful planning. Some firms do go it alone, but engaging the services of an experienced consultant who has broad experience in workstation virtualisation for CAD is usually money well spent.

While centralised workstations typically appeal more to enterprises, smaller AEC firms can still reap the benefits. Depending on the needs of the firm — security, remote access, collaboration etc — there are various solutions for different budgets and with more ‘pay as you go’ cloud services coming on line, upfront capital costs can also be eliminated, which reduces the risk.

Top tips for deploying CAD in a virtualised environment

1) Don’t go it alone: Moving to a virtualised workstation environment is a massive undertaking, so call in the experts to help. Engage a consultant with specialist knowledge of both the virtualisation platform (Citrix or VMware) and CAD / BIM software, and one that has a proven track record in deploying solutions at AEC firms of a similar size to yours. An experienced CAD / BIM virtualisation specialist should know not only how to spec the virtual workstations and virtual machines but also how to optimise the operating system, the virtualisation software and the CAD tools themselves, specifically for BIM workflows.

2) Set deadlines: Don’t let a Proof of Concept (POC) go on forever or the project may never get off the ground. Dedicate full time CAD users to testing out the technology and set strict objectives and deadlines at every stage.

3) Engage the users: No one likes change and CAD users are very attached to their workstations, so moving CAD to a virtualised environment is likely to be a big culture shock. Give users the opportunity to load up their own datasets to demo servers to try things out. Manage expectations, involve the most sceptical users in the POC, educate them in best practices and help them learn how to harness the full capabilities of a virtualised environment.

4) Buy high-spec equipment: Don’t scrimp on hardware components. Spec machines out with as much RAM as possible and high-end CPUs. CAD / BIM software loves GHz so make this a priority, but make sure you balance this with plenty of CPU cores to maximise user density.

5) Don’t virtualise everything: There may be a temptation to virtualise every bit of software you use, but a mixed deployment of desktop and virtual workstations tends to suit most AEC firms. Keep high-end desktop workstations for power users of 3ds Max Design, for example, and strip out the apps you don’t really need.

Key hardware components for remote workstations

Central Processing Unit (CPU)

Intel

Having lots of CPU cores in a desktop workstation only really benefits users of multithreaded software, such as ray trace rendering or engineering simulation.

However, in rack workstations and servers multiple cores are essential if you wish to get lots of users on a single box. Most machines feature one Intel Xeon E5-1600 v3 CPU or two Intel Xeon E5-2600 v3 CPUs, which have anything up to 18 cores per CPU.

However, choosing the best CPU is not as straightforward as going for the one with the most cores. As the core count increases, the GHz goes down, which impacts the performance of CAD software. Finding the right balance is important.

While the Intel Xeon E5 v3 series CPU has become a mainstay for remote workstations the new Intel Xeon E3-1200 v4 series is already starting to turn heads.

The quad core chip features integrated Intel Iris Pro Graphics P6300 (see below) so does not need a separate Nvidia or AMD GPU to deliver interactive 3D graphics.

Servers like the HP Moonshot have dozens of these CPUs inside, each on its own cartridge. As each cartridge is a self contained workstation in its own right, users are able to connect directly without getting into the realms of virtualisation.

Graphics Processing Unit (GPU)

Nvidia

Nvidia GRID, now in its second generation, is by far the most mature virtual GPU (vGPU) technology. It supports multiple users by giving each user a dedicated slice of the GPU.

The technology comprises software and GPUs and is found in nearly all 3D accelerated VDI workstations and servers. Nvidia’s first GRID-capable GPUs were also called GRID (the K1 and K2) but its latest models (the M6 and M60) have taken on the Tesla brand, which was previously reserved for GPU compute.

As GRID 2.0 and the new Tesla GPUs were only announced in September they are only just starting to find their way into rack servers.

The GRID K1 and K2 and the Tesla M60 are double height PCIe cards so fit into standard rack servers. The Nvidia Tesla M6 is the first vGPU with an MXM form factor, designed specifically for blade servers.

Nvidia says GRID 2.0 offers double the number of concurrent users per GPU or double the application performance compared to the previous generation. vGPU profiles can be assigned to support the different requirements of users. On a single Tesla M60 this can be anywhere from two power users (8GB per user) to 32 entry-level 3D users (512MB per user).
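The two ends of that range follow from simple division of the card's graphics memory. The sketch below assumes the frame buffer is split evenly between users and infers a 16GB total from the 'two power users at 8GB each' figure above; the intermediate 1GB and 2GB profile sizes are hypothetical examples, and the calculation ignores any other limits the vGPU software may impose.

```python
# Inferred from the text: two users at 8GB each on one Tesla M60.
M60_FRAME_BUFFER_MB = 2 * 8 * 1024


def users_per_card(profile_mb: int, card_mb: int = M60_FRAME_BUFFER_MB) -> int:
    """Concurrent users on one card if every user gets the same
    dedicated slice of graphics memory."""
    if profile_mb <= 0:
        raise ValueError("profile size must be positive")
    return card_mb // profile_mb


if __name__ == "__main__":
    # 8192MB and 512MB are the profiles quoted in the text;
    # 2048MB and 1024MB are illustrative in-between sizes.
    for profile_mb in (8192, 2048, 1024, 512):
        print(f"{profile_mb}MB per user -> {users_per_card(profile_mb)} users")
```

Changing the profile is a software setting, which is what allows the same card to serve a few power users on one project and many light users on the next.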

Nvidia’s Quadro desktop workstation GPUs are also available in select rack workstations and servers. These are either used for 1:1 connections, GPU passthrough or shared GPU.

AMD

AMD made its long-awaited entrance to graphics virtualisation in September with the AMD Multiuser GPU, pitched as the world’s first hardware-based virtualised GPU solution. AMD says the GPU is built from the ground up for virtualisation, directly inside the silicon. It is built on SR-IOV (Single Root I/O Virtualisation) technology, a standardised way for devices to expose hardware virtualisation.

AMD says up to 15 users can be supported on a single Multiuser GPU, though this is for entry-level applications. For CAD it will be more like six to ten users and for graphics intensive design applications, two to six users.

AMD Multiuser GPU is expected to ship later this year or early 2016. No specifications have been released yet but we understand it will work in GPU passthrough mode. We imagine there will be multiple variants of the GPU to cater to different types of users and servers.

Meanwhile, AMD’s FirePro desktop GPUs are also available in select rack workstations and servers. These are either used for 1:1 connections, GPU passthrough or shared GPU.

AMD FirePro R5000 offers a unique proposition for remote CAD. It combines Teradici PCoIP hardware and mid-range 3D graphics on a single PCIe board, which means only one motherboard slot is used instead of two, which helps increase density. Up to eight FirePro R5000s can fit in a BOXX XDI V8.

Intel

Intel is starting to ramp up its 3D graphics developments and is positioning its new Intel Iris Pro Graphics P6300 as a serious solution for 3D CAD.

Intel says the GPU, which is packaged with the Intel Xeon E3-1200 v4 CPU, can deliver up to 1.8 times the 3D graphics performance of the previous generation Intel HD graphics P4600.

While raw performance is essential for 3D CAD, Intel will know it still has a job to do to match the years of investment both AMD and Nvidia have in driver development for professional CAD applications. It will need to demonstrate that its graphics technology not only delivers smooth interactive 3D graphics, but also stability and full support for the features on offer in modern CAD applications.

Intel also has its own Graphics Virtualisation Technology (GVT-g) in development, which means the GPU can be shared between concurrent users. Details of this technology are scarce.

Remoting hardware

Teradici

Teradici’s PCoIP (PC-over-IP) technology offers the easiest route to getting remote access to a rack workstation or server. Rather than doing everything in software, its PCIe Tera host card sits inside the rack workstation and compresses, encrypts and encodes the display data. It is widely regarded as the highest performing remote graphics technology.

The downside is density. As rack workstations have a set number of PCIe slots there is a limit to the number of users that can be supported on a single machine.

Teradici hardware technology is typically used for 1:1 connections but also works in GPU passthrough mode, where each physical GPU is matched with a physical Teradici card.

Teradici also has a software-based remoting technology called ‘Workstation Access Software’, which can be used with desktop workstations or inside the data centre.

The virtual workstation appliance

Dell is looking to take the pain out of workstation virtualisation with its Dell Precision Appliance for Wyse. It comes with the bold claim that firms can get up and running in just five minutes and only need limited virtualisation experience.

The appliance features all the software and hardware needed to deploy a CAD-focused virtual workstation solution. This includes a Dell Precision R7910 rack workstation, Nvidia Quadro or Nvidia GRID GPUs, Teradici PCoIP remote workstation technology and VMware virtualisation software.

The system can support up to three users per appliance in dedicated GPU mode (with three Nvidia Quadro) or four to eight users per appliance in vGPU mode (with two Nvidia GRID).

Users can connect via a diverse set of endpoints including Dell Wyse dual or quad display thin and zero clients, desktops, laptops, or Dell Precision tower and mobile workstations.

Dell says the appliance can be managed without a dedicated IT staff, or by IT staff with limited virtualisation experience. This is sure to appeal to SMEs, who often have limited skills in house, but Dell says the appliance is also suitable for larger enterprises.

Rack mounted workstations and servers for remote CAD

There are a whole host of different rack mounted workstations and servers that are capable of serving 3D CAD users remotely.

What separates a workstation from a server is a bit of a grey area, but workstations can typically support one or many users whereas servers only support multiple users. Servers also tend to offer better remote management capabilities. Importantly, both include 3D CAD-class GPUs, which is what differentiates them from traditional data centre servers.

The majority of machines are 1U or 2U, where ‘U’ refers to the unit of height taken up in a standard server rack (44.45 mm). However, there are also 4U, 5U servers and blades, a special type of server architecture that houses multiple server modules (‘blades’) in a chassis.

‘Rack units’ are important as this has a direct impact on density and the number of users that can be supported in a given space. Climate controlled data centres, complete with enterprise storage, optimised network and redundancy, are not cheap.

For smaller firms on a tight budget, it is not unheard of to stick a single rack workstation in a cupboard or under a desk — even if some IT professionals may baulk at the idea. Some desktop workstations — the Fujitsu Celsius R940, for example — can also be configured to support multiple CAD users in a virtualised environment.

HP DL380z

HP’s 2U rack mount virtual workstation offers all the security and centralised management benefits of the mature HP DL380p server, but it has been optimised for high-end 3D software.

The machine features one or two Intel Xeon E5-2600 v3 CPUs (up to 18 cores each), up to 1.5TB of 2,133MHz DDR4 memory and a choice of Nvidia GPUs, including up to two GRID K2s. It can also support up to ten 2.5-inch drives and boasts 10 Gigabit Ethernet for a fast connection to shared data centre storage.

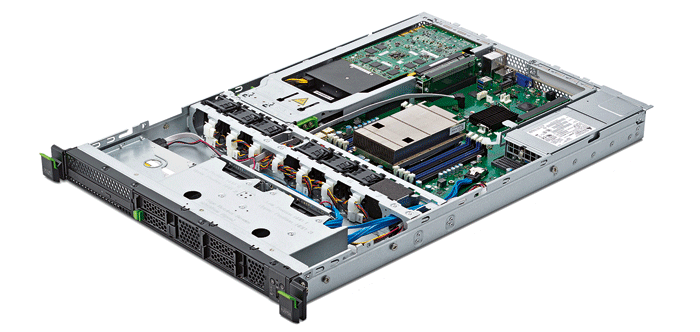

Fujitsu Celsius C740

With a single Intel Xeon E5-2600 v3 (up to 18 cores) and one Nvidia GRID GPU, the Fujitsu Celsius C740 can deliver the same density of users as a typical 2U virtual workstation. However, when used as a dedicated 1:1 remote workstation with Teradici PCoIP hardware, AEC firms can cram in twice as many power CAD users because of its 1U chassis. The machine can support up to 256GB DDR4 (ECC) memory, an M.2 SSD module and up to four 2.5-inch drives.

BOXX XDI V4 / V8

BOXX’s XDI workstations offer an interesting proposition for remote CAD. Instead of vGPU with Nvidia GRID, the machines are optimised for GPU passthrough and have a dedicated AMD FirePro R5000 GPU for each user.

The 1U BOXX XDI V4 can support up to four users, the 2U BOXX XDI V8 up to eight. This density is achieved because the AMD FirePro R5000 has Teradici PCoIP hardware built in, so it does not need the Teradici host cards that would normally take up half the PCIe slots.

Other rack mounted workstations include the Workstation Specialists WS-r and Dell Precision R7910.

HP Moonshot

HP Moonshot is very different to most rack servers, as it essentially plays host to 45 self-contained workstations, each of which is accessed over a 1:1 connection.

The 4.3U Moonshot chassis can accommodate up to 45 HP ProLiant m710p server cartridges, which plug in to the server. Each has its own CPU, memory, storage and network.

Rather than having an add-in Nvidia or AMD GPU, 3D graphics is handled by the Intel Xeon E3-1284L v4 CPU, which includes new generation integrated Intel Iris Pro Graphics P6300.

Each cartridge has up to 32GB RAM, up to 960GB of M.2 storage and dual port 10GbE.

Supermicro offers a similar solution with its Microcloud, which has up to 12 cartridges, each with its own Xeon E3-1284L v4, in a 3U chassis.
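To compare these 1:1 machines on density, the user counts and chassis heights quoted in this article can be reduced to users per rack unit. This is only a sketch of the arithmetic: it treats each GPU or cartridge as one user and says nothing about per-user performance, cost or power.

```python
RACK_UNIT_MM = 44.45  # height of 1U in a standard server rack

# (maximum 1:1 users, chassis height in U), as quoted in the text.
SYSTEMS = {
    "BOXX XDI V4": (4, 1.0),
    "BOXX XDI V8": (8, 2.0),
    "HP Moonshot": (45, 4.3),
    "Supermicro Microcloud": (12, 3.0),
}


def users_per_rack_unit(users: int, height_u: float) -> float:
    """Density expressed as users supported per 1U of rack space."""
    return users / height_u


def chassis_height_mm(height_u: float) -> float:
    """Physical chassis height in millimetres."""
    return height_u * RACK_UNIT_MM


if __name__ == "__main__":
    for name, (users, height_u) in SYSTEMS.items():
        density = users_per_rack_unit(users, height_u)
        print(f"{name}: {density:.1f} users per U, "
              f"{chassis_height_mm(height_u):.0f}mm tall")
```

On these figures the cartridge-based Moonshot packs in more than twice as many 1:1 users per rack unit as the add-in GPU machines.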

For a more traditional 2U server the Lenovo x3650 M5 can support up to two Intel Xeon E5-2600 v3 CPUs, two Nvidia GRID GPUs and up to 1.5 TB of memory. Other Lenovo servers include the Lenovo NeXtScale nx360 M5, a dual Xeon machine that can be coupled with the NeXtScale PCIe 2U Native Expansion Tray to add four Nvidia GRID K2 GPUs.

Other graphics servers include the 2U Fujitsu Primergy CX400 M1, which stands out for its ability to host four Nvidia GRID K2 GPUs, the 2U Dell PowerEdge R720 and the Cisco UCS C240 M4 Rack Server.

For blade, the Cisco UCS B200 M4, which features the new Nvidia Tesla M6, will be available soon.

Supermicro has a massive number of machines that support Nvidia GRID, from 1U to 4U servers.

Other manufacturers of Nvidia GRID compatible servers include Gigabyte, SGI and Tyan. There are many more from Dell, HP, Cisco, and Lenovo.

The virtualisation software stack

Centralised workstation deployments can require a complex stack of software. Here are the basics, focusing on the two main providers, Citrix and VMware.

Remote Access (1:1)

With a 1:1 connection the software requirements are relatively simple. All you need is some broking software, such as VMware View, to dynamically manage the pool of remote workstations.

GPU pass through

For GPU pass through you will need hypervisor software, which is designed specifically to create and run virtual machines (VMs). This is available in VMware vSphere 5.5 and Citrix XenServer 6.02 SP1. VMware Horizon or Citrix XenDesktop are also needed to create the virtual desktops.

Virtual GPU (vGPU)

For virtual GPU (vGPU) with Nvidia GRID the requirements are very similar, though you will need later versions of the software: VMware vSphere 6.0 and Citrix XenServer 6.2 SP1 or above.

Client Software

Client side, you will need VMware Horizon Client or Citrix Receiver, which are both available for OS X, Windows, Linux, iOS & Android. Alternatively, use a thin client that supports the HDX 3D Pro protocol (for Citrix) or PCoIP (for VMware).
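The stack described above can be summarised as a small lookup table. This is just one way of organising the information: the product names and version strings are copied as printed in this article (minimum versions, not a compatibility matrix), while the dictionary layout and function name are assumptions made for illustration.

```python
# Product names and versions copied as printed in the text above.
STACK = {
    "vmware": {
        "gpu_passthrough": {"hypervisor": "VMware vSphere 5.5",
                            "desktops": "VMware Horizon"},
        "vgpu": {"hypervisor": "VMware vSphere 6.0",
                 "desktops": "VMware Horizon"},
        "client": "VMware Horizon Client",
        "thin_client_protocol": "PCoIP",
    },
    "citrix": {
        "gpu_passthrough": {"hypervisor": "Citrix XenServer 6.02 SP1",
                            "desktops": "Citrix XenDesktop"},
        "vgpu": {"hypervisor": "Citrix XenServer 6.2 SP1",
                 "desktops": "Citrix XenDesktop"},
        "client": "Citrix Receiver",
        "thin_client_protocol": "HDX 3D Pro",
    },
}


def required_software(vendor: str, mode: str) -> dict:
    """Return the server and client software for a vendor and GPU mode."""
    vendor_stack = STACK[vendor.lower()]
    if mode not in ("gpu_passthrough", "vgpu"):
        raise ValueError("mode must be 'gpu_passthrough' or 'vgpu'")
    return {
        **vendor_stack[mode],
        "client": vendor_stack["client"],
        "thin_client_protocol": vendor_stack["thin_client_protocol"],
    }


if __name__ == "__main__":
    for vendor in STACK:
        print(vendor, required_software(vendor, "vgpu"))
```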

The future

Microsoft will also become more relevant to remote graphics for CAD and BIM software next year when it delivers OpenGL 4.4 support in RemoteFX vGPU in Windows Server 2016. OpenGL is the 3D graphics API used by most 3D design software.

Build yourself a remote workstation

So you want to use your high-performance workstation from home, on site or at a client’s office, but do not want to invest in a formal data centre solution? Do not worry; it is actually relatively easy to turn any desktop machine into a remote workstation, though in many of the following examples you will need a Virtual Private Network (VPN) in place if you want to use your machine outside of the LAN.

Screen sharing technologies like GoToMyPC or Skype can do a job but are optimised for office applications rather than 3D CAD, so the experience can be poor.

For the best 3D CAD performance use hardware-based PCoIP. Simply buy a Teradici host card, which you stick inside your workstation, coupled with a PCoIP hardware or software client at the other end to connect.

Teradici also offers the reliably named ‘Workstation Access Software’, which uses the PCoIP protocol to add remote access capability to your 3D CAD desktop workstation. It is available for $199 from Dell and BOXX, and other sellers. See our review on page 26.

However, getting remote capabilities for your workstation does not have to cost you any money. HP Z Workstations come free with HP RGS (Remote Graphics Software), a remote desktop protocol specifically designed for 3D graphics.

This is one of a series of articles on workstation virtualisation and related technologies, which appeared in AEC Magazine’s workstation virtualisation special edition. Click the links below to read all the other stories.

Global flexibility: Local sustainability

IMSCAD: BIM in virtualised environments

REVIEW: Teradici Workstation Access software

Collaborating with Revit over WAN
